The intent of this workshop is to educate users about EKS Anywhere and its different use cases. The workshop also covers how to provision and manage EKS Anywhere clusters, run workloads, and use observability tools such as Prometheus and Grafana to monitor an EKS Anywhere cluster. We recommend this workshop for Cloud Architects, SREs, DevOps engineers, and other IT professionals.


Welcome to the EKS Anywhere Workshop!

- 1: Introduction

- 1.1: Overview

- 1.2: Benefits & Use cases

- 1.3: Customer FAQ

- 2: Provisioning

- 2.1: Overview

- 2.2: Admin machine setup

- 2.3: Local cluster setup

- 2.4: Preparation needed for hosting EKS Anywhere on vSphere

- 2.5: vSphere cluster

- 3: Curated Packages Workshop

- 3.1: Prometheus use cases

- 3.1.1: Prometheus with Grafana

- 3.2: ADOT use cases

- 3.2.1: ADOT with AMP and AMG

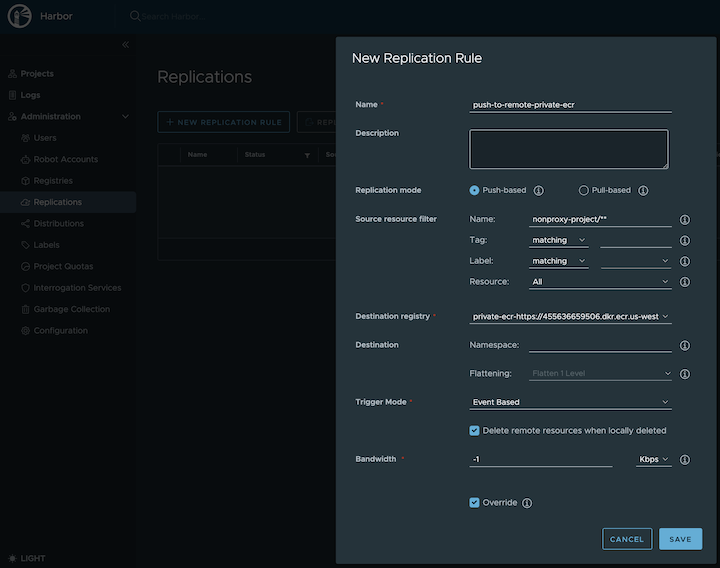

- 3.3: Harbor use cases

1 - Introduction

The following topics are covered in this chapter:

- EKS Anywhere service overview

- Benefits & service considerations

- Frequently asked questions (FAQs)

1.1 - Overview

What is the purpose of this workshop?

The purpose of this workshop is to provide a more prescriptive walkthrough of building, deploying, and operating an EKS Anywhere cluster. It uses existing content from the documentation, condensed for those who want to get started quickly.

EKS Anywhere Overview

Amazon EKS Anywhere is a new deployment option for Amazon EKS that allows customers to create and operate Kubernetes clusters on customer-managed infrastructure, supported by AWS. Customers can now run Amazon EKS Anywhere on their own on-premises infrastructure using Bare Metal, CloudStack, or VMware vSphere.

Amazon EKS Anywhere helps simplify the creation and operation of on-premises Kubernetes clusters with default component configurations while providing tools for automating cluster management. It builds on the strengths of Amazon EKS Distro: the same Kubernetes distribution that powers Amazon EKS on AWS. AWS supports all Amazon EKS Anywhere components, including the integrated 3rd-party software, so that customers can reduce their support costs and avoid maintenance of redundant open-source and third-party tools. In addition, Amazon EKS Anywhere gives customers on-premises Kubernetes operational tooling that's consistent with Amazon EKS. You can use the EKS console to view all of your Kubernetes clusters (including EKS Anywhere clusters) running anywhere, through the EKS Connector (public preview).

1.2 - Benefits & Use cases

Here are some key customer benefits of using Amazon EKS Anywhere:

- Simplify on-premises Kubernetes management - Amazon EKS Anywhere helps simplify the creation and operation of on-premises Kubernetes clusters with default component configurations while providing tools for automating cluster management.

- One stop support - AWS supports all Amazon EKS Anywhere components including the integrated 3rd-party software, so that customers can reduce their support costs and avoid maintenance of redundant open-source and third-party tools.

- Consistent and reliable - Amazon EKS Anywhere gives you on-premises Kubernetes operational tooling that’s consistent with Amazon EKS. It builds on the strengths of Amazon EKS Distro and provides open-source software that’s up-to-date and patched, so you can have a Kubernetes environment on-premises that is more reliable than self-managed Kubernetes offerings.

Use-cases supported by EKS Anywhere

EKS Anywhere is suitable for the following use-cases:

- Hybrid cloud consistency - You may have lots of Kubernetes workloads on Amazon EKS but also need to operate Kubernetes clusters on-premises. Amazon EKS Anywhere offers strong operational consistency with Amazon EKS so you can standardize your Kubernetes operations based on a unified toolset.

- Disconnected environment - You may need to secure your applications in a disconnected environment or run applications in areas without internet connectivity. Amazon EKS Anywhere allows you to deploy and operate highly-available clusters with the same Kubernetes distribution that powers Amazon EKS on AWS.

- Application modernization - Amazon EKS Anywhere empowers you to modernize your on-premises applications, removing the heavy lifting of keeping up with upstream Kubernetes and security patches, so you can focus on your core business value.

- Data sovereignty - You may want to keep your large data sets on-premises due to legal requirements concerning the location of the data. Amazon EKS Anywhere brings the trusted Amazon EKS Kubernetes distribution and tools to where your data needs to be.

1.3 - Customer FAQ

AuthN / AuthZ

How do my applications running on EKS Anywhere authenticate with AWS services using IAM credentials?

You can use the IAM Roles for Service Accounts (IRSA) feature. See the IRSA reference guide for details.

Does EKS Anywhere support OIDC (including Azure AD and AD FS)?

Yes, EKS Anywhere can create clusters that support API server OIDC authentication. This means you can federate authentication through AD FS locally or through Azure AD, along with other IDPs that support the OIDC standard. To add OIDC support to your EKS Anywhere clusters, configure your cluster by updating the configuration file before creating the cluster. Please see the OIDC reference for details.

Does EKS Anywhere support LDAP?

EKS Anywhere does not support LDAP out of the box. However, you can look into the Dex LDAP Connector.

Can I use AWS IAM for Kubernetes resource access control on EKS Anywhere?

Yes, you can install the aws-iam-authenticator on your EKS Anywhere cluster to achieve this.

Miscellaneous

How much does EKS Anywhere cost?

EKS Anywhere is free, open source software that you can download, install on your existing hardware, and run in your own data centers. It includes management and CLI tooling for all supported cluster topologies on all supported providers. You are responsible for providing infrastructure where EKS Anywhere runs (e.g. VMware, bare metal), and some providers require third party hardware and software contracts.

The EKS Anywhere Enterprise Subscription provides access to curated packages and enterprise support. This is an optional, but recommended, cost based on how many clusters and how many years of support you need.

Can I connect my EKS Anywhere cluster to EKS?

Yes, you can install EKS Connector to connect your EKS Anywhere cluster to AWS EKS. EKS Connector is a software agent that you can install on the EKS Anywhere cluster that enables the cluster to communicate back to AWS. Once connected, you can immediately see a read-only view of the EKS Anywhere cluster with workload and cluster configuration information on the EKS console, alongside your EKS clusters.

How does the EKS Connector authenticate with AWS?

During start-up, the EKS Connector generates and stores an RSA key-pair as Kubernetes secrets. It also registers with AWS using the public key and the activation details from the cluster registration configuration file. The EKS Connector needs AWS credentials to receive commands from AWS and to send the response back. Whenever it requires AWS credentials, it uses its private key to sign the request and invokes AWS APIs to request the credentials.

How does the EKS Connector authenticate with my Kubernetes cluster?

The EKS Connector acts as a proxy and forwards the EKS console requests to the Kubernetes API server on your cluster. In the initial release, the connector uses impersonation

with its service account secrets to interact with the API server. Therefore, you need to associate the connector’s service account with a ClusterRole, which gives permission to impersonate AWS IAM entities.

How do I enable an AWS user account to view my connected cluster through the EKS console?

For each AWS user or other IAM identity, add a cluster role binding to the Kubernetes cluster with the appropriate permissions for that IAM identity. Additionally, each of these IAM entities should be associated with the IAM policy to invoke the EKS Connector on the cluster.

Can I use Amazon Controllers for Kubernetes (ACK) on EKS Anywhere?

Yes, you can use AWS services from your EKS Anywhere clusters on-premises through Amazon Controllers for Kubernetes (ACK).

Can I deploy EKS Anywhere on other clouds?

EKS Anywhere can be installed on any infrastructure with the required Bare Metal, CloudStack, or VMware vSphere components. See the EKS Anywhere Bare Metal or vSphere documentation.

How is EKS Anywhere different from ECS Anywhere?

ECS Anywhere is an option for Amazon Elastic Container Service (ECS) to run containers on your on-premises infrastructure. The ECS Anywhere Control Plane runs in an AWS region and allows you to install the ECS agent on worker nodes that run outside of an AWS region. Workloads that run on ECS Anywhere nodes are scheduled by ECS. You are not responsible for running, managing, or upgrading the ECS Control Plane.

EKS Anywhere runs the Kubernetes Control Plane and worker nodes on your infrastructure. You are responsible for managing the EKS Anywhere Control Plane and worker nodes. There is no requirement to have an AWS account to run EKS Anywhere.

If you’d like to see how EKS Anywhere compares to EKS please see the information here.

How can I manage EKS Anywhere at scale?

You can perform cluster life cycle and configuration management at scale through GitOps-based tools. EKS Anywhere offers git-driven cluster management through the integrated Flux Controller. See the Manage cluster with GitOps documentation for details.

Can I run EKS Anywhere on ESXi?

No. EKS Anywhere is only supported on providers listed on the Create production cluster page. There would need to be a change to the upstream project to support ESXi.

Can I deploy EKS Anywhere on a single node?

Yes. Single-node cluster deployment is supported for Bare Metal. See workerNodeGroupConfigurations.

2 - Provisioning

This chapter walks through the following:

- Overview of provisioning

- Prerequisites for creating an EKS Anywhere cluster

- Provisioning a new EKS Anywhere cluster

- Verifying the cluster installation

2.1 - Overview

EKS Anywhere creates a Kubernetes cluster on premises using a chosen provider. Supported providers include Bare Metal (via Tinkerbell), CloudStack, and vSphere. To manage that cluster, you can run cluster create and delete commands from an Ubuntu or Mac Administrative machine.

Creating a cluster involves downloading EKS Anywhere tools to an Administrative machine, then running the eksctl anywhere create cluster command to deploy that cluster to the provider.

A temporary bootstrap cluster runs on the Administrative machine to direct the target cluster creation.

For a detailed description, see Cluster creation workflow.

Here’s a diagram that explains the process visually.

EKS Anywhere Create Cluster


2.2 - Admin machine setup

EKS Anywhere will create and manage Kubernetes clusters on multiple providers. Currently we support creating development clusters locally using Docker and production clusters from providers listed on the Create production cluster page.

Creating an EKS Anywhere cluster begins with setting up an Administrative machine where you will run Docker and add some binaries. From there, you create the cluster for your chosen provider. See Create cluster workflow for an overview of the cluster creation process.

To create an EKS Anywhere cluster you will need eksctl and the eksctl-anywhere plugin. This will let you create a cluster in multiple providers for local development or production workloads.

NOTE: For the Snow provider, Snow devices come with a pre-configured Admin AMI that can be used to create an Admin instance with all the binaries, dependencies, and artifacts needed to create an EKS Anywhere cluster. Skip the steps below and see Create Snow production cluster to get started with EKS Anywhere on Snow.

Administrative machine prerequisites

- Docker 20.x.x

- Mac OS 10.15 / Ubuntu 20.04.2 LTS (See Note on newer Ubuntu versions)

- 4 CPU cores

- 16GB memory

- 30GB free disk space

- Administrative machine must be on the same Layer 2 network as the cluster machines (Bare Metal provider only).
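You can check the hardware and OS prerequisites above before installing anything. The script below is a minimal sketch and assumes bash on Linux or macOS. The function names (`is_supported_arch`, `at_least`, `preflight`, and so on) are illustrative and are not part of EKS Anywhere. Memory and disk are reported in decimal gigabytes, which is an assumption about how the "16GB" and "30GB" figures are meant. The Docker check only confirms that the daemon is reachable; it does not check the version.

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight check for an EKS Anywhere Administrative machine.
# Thresholds mirror the prerequisites list: x86-64 CPU, 4 cores, 16 GB memory, 30 GB free disk.

# Succeeds if the architecture string (as printed by `uname -m`) is x86-64.
is_supported_arch() {
  case "$1" in
    x86_64 | amd64) return 0 ;;
    *) return 1 ;;
  esac
}

# Succeeds if integer $1 is greater than or equal to integer $2.
at_least() {
  [ "$1" -ge "$2" ]
}

# Prints the number of logical CPU cores (macOS or Linux).
cpu_cores() {
  if [ "$(uname -s)" = "Darwin" ]; then sysctl -n hw.ncpu; else nproc; fi
}

# Prints total memory in decimal GB, rounded down.
mem_gb() {
  if [ "$(uname -s)" = "Darwin" ]; then
    echo $(( $(sysctl -n hw.memsize) / 1000000000 ))
  else
    # /proc/meminfo reports MemTotal in KiB.
    awk '/^MemTotal:/ { printf "%d\n", $2 * 1024 / 1000000000 }' /proc/meminfo
  fi
}

# Prints free disk space in decimal GB for the filesystem holding the current directory.
free_disk_gb() {
  # df -P -k reports 1024-byte blocks; column 4 of the data line is "Available".
  df -P -k . | awk 'NR == 2 { printf "%d\n", $4 * 1024 / 1000000000 }'
}

# Prints one line per failed check and returns non-zero if any check fails.
preflight() {
  local rc=0
  is_supported_arch "$(uname -m)" || { echo "FAIL: architecture $(uname -m) is not x86-64"; rc=1; }
  at_least "$(cpu_cores)" 4 || { echo "FAIL: fewer than 4 CPU cores"; rc=1; }
  at_least "$(mem_gb)" 16 || { echo "FAIL: less than 16 GB memory"; rc=1; }
  at_least "$(free_disk_gb)" 30 || { echo "FAIL: less than 30 GB free disk space"; rc=1; }
  docker info >/dev/null 2>&1 || { echo "FAIL: Docker is not installed or not running"; rc=1; }
  if [ "$rc" -eq 0 ]; then echo "All checks passed"; fi
  return "$rc"
}
```

Source the script and run `preflight`. It prints a line for each failed check and returns non-zero if any check fails. The Layer 2 requirement for Bare Metal is not something a local script can verify.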

If you are using Ubuntu, use the Docker CE installation instructions to install Docker and not the Snap installation, as described here.

If you are using Ubuntu 21.10 or 22.04, you will need to switch from cgroups v2 to cgroups v1. For details, see Troubleshooting Guide.

If you are using Docker Desktop, you need to know that:

- For EKS Anywhere Bare Metal, Docker Desktop is not supported

- For EKS Anywhere vSphere, if you are using Mac OS Docker Desktop 4.4.2 or newer, `"deprecatedCgroupv1": true` must be set in `~/Library/Group\ Containers/group.com.docker/settings.json`.

Install EKS Anywhere CLI tools

Via Homebrew (macOS and Linux)

Warning

EKS Anywhere only works on computers with x86 and amd64 processor architecture.

It currently will not work on computers with Apple Silicon or Arm based processors.

You can install eksctl and eksctl-anywhere with Homebrew.

This package will also install kubectl and the aws-iam-authenticator which will be helpful to test EKS Anywhere clusters.

brew install aws/tap/eks-anywhere

Manually (macOS and Linux)

Install the latest release of eksctl.

The EKS Anywhere plugin requires eksctl version 0.66.0 or newer.

```shell
curl "https://github.com/weaveworks/eksctl/releases/latest/download/eksctl_$(uname -s)_amd64.tar.gz" \
    --silent --location \
    | tar xz -C /tmp
sudo mv /tmp/eksctl /usr/local/bin/
```
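To confirm that the installed eksctl meets the 0.66.0 minimum, compare versions numerically rather than as strings. As plain strings, "0.150.0" sorts before "0.66.0". The following is a minimal sketch that assumes bash and a `sort` that supports `-V` (GNU coreutils does). `version_ge` and `check_eksctl` are hypothetical helper names. The sketch also assumes that `eksctl version` prints a bare version string.

```shell
#!/usr/bin/env bash
# Succeeds if version $1 is greater than or equal to version $2.
# `sort -V` orders dotted versions component by component (0.66.0 < 0.150.0).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Checks the installed eksctl against the minimum required by the EKS Anywhere plugin.
# Assumes `eksctl version` prints only the version number, e.g. 0.150.0.
check_eksctl() {
  local minimum="0.66.0" installed
  installed="$(eksctl version)" || return 1
  if version_ge "$installed" "$minimum"; then
    echo "eksctl $installed meets the $minimum minimum"
  else
    echo "eksctl $installed is older than $minimum; install a newer release" >&2
    return 1
  fi
}
```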

Install the eksctl-anywhere plugin.

```shell
RELEASE_VERSION=$(curl https://anywhere-assets.eks.amazonaws.com/releases/eks-a/manifest.yaml --silent --location | yq ".spec.latestVersion")
EKS_ANYWHERE_TARBALL_URL=$(curl https://anywhere-assets.eks.amazonaws.com/releases/eks-a/manifest.yaml --silent --location | yq ".spec.releases[] | select(.version==\"$RELEASE_VERSION\").eksABinary.$(uname -s | tr A-Z a-z).uri")
curl $EKS_ANYWHERE_TARBALL_URL \
    --silent --location \
    | tar xz ./eksctl-anywhere
sudo mv ./eksctl-anywhere /usr/local/bin/
```

Install the kubectl Kubernetes command line tool. This can be done by following the instructions here.

Or you can install the latest kubectl directly with the following.

```shell
export OS="$(uname -s | tr A-Z a-z)" ARCH=$(test "$(uname -m)" = 'x86_64' && echo 'amd64' || echo 'arm64')
curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/${OS}/${ARCH}/kubectl"
sudo mv ./kubectl /usr/local/bin
sudo chmod +x /usr/local/bin/kubectl
```
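Before moving on, it helps to confirm that every binary from this section is on your `PATH`. The following is a minimal sketch that assumes bash. `require_tools` is a hypothetical helper, not an EKS Anywhere command.

```shell
#!/usr/bin/env bash
# Reports where each named tool is installed; returns non-zero if any is missing from PATH.
require_tools() {
  local tool missing=0
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found:   $tool ($(command -v "$tool"))"
    else
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example: the tools installed in this section, plus Docker from the prerequisites.
# require_tools docker eksctl eksctl-anywhere kubectl
```

Once every tool is found, `eksctl anywhere version` and `kubectl version --client` print the installed versions.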

Upgrade eksctl-anywhere

If you installed eksctl-anywhere via homebrew you can upgrade the binary with

brew update

brew upgrade aws/tap/eks-anywhere

If you installed eksctl-anywhere manually you should follow the installation steps to download the latest release.

You can verify your installed version with

eksctl anywhere version

Prepare for airgapped deployments (optional)

When creating an EKS Anywhere cluster, there may be times where you need to do so in an airgapped environment. In this type of environment, cluster nodes are connected to the Admin Machine, but not to the internet. In order to download images and artifacts, however, the Admin machine needs to be temporarily connected to the internet.

An airgapped environment is especially important if you require the most secure networks. EKS Anywhere supports airgapped installation for creating clusters using a registry mirror. For airgapped installation to work, the Admin machine must have:

- Temporary access to the internet to download images and artifacts

- Ample space (80 GB or more) to store artifacts locally

To create a cluster in an airgapped environment, perform the following:

1. Download the artifacts and images that will be used by the cluster nodes to the Admin machine using the following command:

   ```shell
   eksctl anywhere download artifacts
   ```

   A compressed file, `eks-anywhere-downloads.tar.gz`, will be downloaded.

2. To decompress this file, use the following command:

   ```shell
   tar -xvf eks-anywhere-downloads.tar.gz
   ```

   This will create an `eks-anywhere-downloads` folder that we'll be using later.

3. For the next command to run smoothly, ensure that Docker has been pre-installed and is running. Then run the following:

   ```shell
   eksctl anywhere download images -o images.tar
   ```

   For the remaining steps, the Admin machine no longer needs to be connected to the internet or the bastion host.

4. Next, set up a local registry mirror to host the downloaded EKS Anywhere images. To set one up, refer to Registry Mirror configuration.

5. Now that you've configured your local registry mirror, import the images into it using the following command. Replace `<registryUrl>` with the URL of the local registry mirror you created in step 4:

   ```shell
   eksctl anywhere import images -i images.tar -r <registryUrl> \
       --bundles ./eks-anywhere-downloads/bundle-release.yaml
   ```
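The airgapped steps above split into an online phase and an offline phase. The following is a minimal sketch that groups them into two bash functions. It assumes both functions are run from the same working directory. The names `airgap_download` and `airgap_import` are hypothetical; the `eksctl anywhere` subcommands and flags are the ones shown in the steps. The registry mirror setup (step 4) still happens between the two calls.

```shell
#!/usr/bin/env bash
# Phase 1 (Admin machine temporarily online): fetch artifacts and images.
airgap_download() {
  eksctl anywhere download artifacts || return 1
  # Unpacks into ./eks-anywhere-downloads, which holds bundle-release.yaml.
  tar -xvf eks-anywhere-downloads.tar.gz || return 1
  # Docker must be installed and running for this step.
  eksctl anywhere download images -o images.tar
}

# Phase 2 (offline): push the saved images into the local registry mirror.
airgap_import() {
  local registry_url="$1"
  if [ -z "$registry_url" ]; then
    echo "usage: airgap_import <registryUrl>" >&2
    return 1
  fi
  if [ ! -f images.tar ] || [ ! -f ./eks-anywhere-downloads/bundle-release.yaml ]; then
    echo "images.tar or bundle-release.yaml not found; run airgap_download first" >&2
    return 1
  fi
  eksctl anywhere import images -i images.tar -r "$registry_url" \
    --bundles ./eks-anywhere-downloads/bundle-release.yaml
}
```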

You are now ready to deploy a cluster by following the instructions to Create local cluster. See the text below for specific provider instructions.

For Bare Metal (Tinkerbell)

You will need to have hookOS and its OS artifacts downloaded and served locally from an HTTP file server. You will also need to modify the hookImagesURLPath and the osImageURL in the cluster configuration files. Ensure that the structure of the files is set up as described in hookImagesURLPath.

For vSphere

If you are using the vSphere provider, be sure that the requirements in the Prerequisite checklist have been met.

Deploy a cluster

Once you have the tools installed you can deploy a local cluster or production cluster in the next steps.

2.3 - Local cluster setup

EKS Anywhere docker provider deployments

EKS Anywhere supports a Docker provider for development and testing use cases only. This allows you to try EKS Anywhere on your local system before deploying to a supported provider to create either:

- A single, standalone cluster, or

- Multiple management/workload clusters on the same provider, as described in Cluster topologies. The management/workload topology is recommended for production clusters and can be tried out here using both `eksctl` and GitOps tools.

Create a standalone cluster

Prerequisite Checklist

To install the EKS Anywhere binaries and see system requirements, please follow the installation guide.

Steps

Generate a cluster config:

```shell
CLUSTER_NAME=mgmt
eksctl anywhere generate clusterconfig $CLUSTER_NAME \
    --provider docker > $CLUSTER_NAME.yaml
```

The command above creates a file named `$CLUSTER_NAME.yaml` (here, `mgmt.yaml`) with the contents below in the directory where it is executed. The configuration specification is divided into two sections:

- Cluster

- DockerDatacenterConfig

```yaml
apiVersion: anywhere.eks.amazonaws.com/v1alpha1
kind: Cluster
metadata:
  name: mgmt
spec:
  clusterNetwork:
    cniConfig:
      cilium: {}
    pods:
      cidrBlocks:
      - 192.168.0.0/16
    services:
      cidrBlocks:
      - 10.96.0.0/12
  controlPlaneConfiguration:
    count: 1
  datacenterRef:
    kind: DockerDatacenterConfig
    name: mgmt
  externalEtcdConfiguration:
    count: 1
  kubernetesVersion: "1.25"
  managementCluster:
    name: mgmt
  workerNodeGroupConfigurations:
  - count: 1
    name: md-0
---
apiVersion: anywhere.eks.amazonaws.com/v1alpha1
kind: DockerDatacenterConfig
metadata:
  name: mgmt
spec: {}
```
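If you change the `pods` or `services` CIDR blocks in `clusterNetwork`, keep them from overlapping each other. Non-overlapping pod and service ranges are a general Kubernetes networking requirement. Two IPv4 blocks overlap exactly when their network bits match under the shorter of the two prefix lengths. The following is a minimal sketch of that check, assuming bash and IPv4. The helper names are illustrative.

```shell
#!/usr/bin/env bash
# Converts a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeeds if IPv4 CIDR blocks $1 and $2 share at least one address.
# The blocks overlap when their addresses agree under the shorter prefix mask.
cidrs_overlap() {
  local net1="${1%/*}" len1="${1#*/}" net2="${2%/*}" len2="${2#*/}"
  local prefix=$(( len1 < len2 ? len1 : len2 ))
  local mask=$(( prefix == 0 ? 0 : (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$net1") & mask )) -eq $(( $(ip_to_int "$net2") & mask )) ]
}
```

The defaults from the generated config, `192.168.0.0/16` for pods and `10.96.0.0/12` for services, do not overlap. `cidrs_overlap 192.168.0.0/16 10.96.0.0/12` therefore returns non-zero.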

Configure Curated Packages

The Amazon EKS Anywhere Curated Packages are only available to customers with the Amazon EKS Anywhere Enterprise Subscription. To request a free trial, talk to your Amazon representative or connect with one here. Cluster creation will succeed if authentication is not set up, but the CLI may print some warnings. Detailed package configurations can be found here.

If you are going to use packages, set up authentication. These credentials should have limited capabilities:

```shell
export EKSA_AWS_ACCESS_KEY_ID="your_access_id"
export EKSA_AWS_SECRET_ACCESS_KEY="your_secret_key"
export EKSA_AWS_REGION="us-west-2"
```
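If you plan to install curated packages, check that the credential variables are set before you start a long cluster creation. The following is a minimal sketch that assumes bash and never prints the secret values. `check_eksa_package_env` is a hypothetical helper. By default it checks the three variables exported above.

```shell
#!/usr/bin/env bash
# Succeeds if every named environment variable is set and non-empty.
# Prints only the names of missing variables, never their values.
check_eksa_package_env() {
  local name missing=0
  if [ "$#" -eq 0 ]; then
    set -- EKSA_AWS_ACCESS_KEY_ID EKSA_AWS_SECRET_ACCESS_KEY EKSA_AWS_REGION
  fi
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable named by $name.
    if [ -z "${!name:-}" ]; then
      echo "not set: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

The management cluster example later in this section exports only the key pair. For that case, call `check_eksa_package_env EKSA_AWS_ACCESS_KEY_ID EKSA_AWS_SECRET_ACCESS_KEY`.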

Create Cluster:

For a regular cluster create (with internet access), type the following:

```shell
eksctl anywhere create cluster -f $CLUSTER_NAME.yaml
```

For an airgapped cluster create, follow the Preparation for airgapped deployments instructions, then type the following:

```shell
eksctl anywhere create cluster -f $CLUSTER_NAME.yaml \
    --bundles-override ./eks-anywhere-downloads/bundle-release.yaml
```

NOTE: To install curated packages during cluster creation, add the `--install-packages packages.yaml` flag to either command.

Example command output

```
Performing setup and validations
✅ validation succeeded {"validation": "docker Provider setup is valid"}
Creating new bootstrap cluster
Installing cluster-api providers on bootstrap cluster
Provider specific setup
Creating new workload cluster
Installing networking on workload cluster
Installing cluster-api providers on workload cluster
Moving cluster management from bootstrap to workload cluster
Installing EKS-A custom components (CRD and controller) on workload cluster
Creating EKS-A CRDs instances on workload cluster
Installing GitOps Toolkit on workload cluster
GitOps field not specified, bootstrap flux skipped
Deleting bootstrap cluster
🎉 Cluster created!
----------------------------------------------------------------------------------
The Amazon EKS Anywhere Curated Packages are only available to customers with the Amazon EKS Anywhere Enterprise Subscription
----------------------------------------------------------------------------------
Installing curated packages controller on management cluster
secret/aws-secret created
job.batch/eksa-auth-refresher created
```
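A `#` comment cannot sit in the middle of a backslash-continued command. The shell ignores everything after the `#`, including the trailing backslash, so the rest of the command runs as a separate line. Optional flags are easier to toggle by building the argument list in a bash array. The following is a minimal sketch. `eksa_create_cluster` and the `DRY_RUN` variable are hypothetical, while `-f`, `--install-packages`, and `--bundles-override` are the flags used in this section.

```shell
#!/usr/bin/env bash
# Usage: eksa_create_cluster <config.yaml> [packages.yaml] [bundle-release.yaml]
# Pass "" to skip an optional argument. Set DRY_RUN=1 to print the command instead of running it.
eksa_create_cluster() {
  local config="$1" packages="${2:-}" bundles="${3:-}"
  local -a args=(anywhere create cluster -f "$config")
  if [ -n "$packages" ]; then
    args+=(--install-packages "$packages")
  fi
  if [ -n "$bundles" ]; then
    # Airgapped installs point at the bundle manifest downloaded earlier.
    args+=(--bundles-override "$bundles")
  fi
  if [ -n "${DRY_RUN:-}" ]; then
    echo "eksctl ${args[*]}"
  else
    eksctl "${args[@]}"
  fi
}
```

For example, `eksa_create_cluster "$CLUSTER_NAME.yaml" packages.yaml` creates a connected cluster with curated packages. `eksa_create_cluster "$CLUSTER_NAME.yaml" "" ./eks-anywhere-downloads/bundle-release.yaml` is the airgapped form without packages.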

Use the cluster

Once the cluster is created you can use it with the generated `KUBECONFIG` file in your local directory:

```shell
export KUBECONFIG=${PWD}/${CLUSTER_NAME}/${CLUSTER_NAME}-eks-a-cluster.kubeconfig
kubectl get ns
```

Example command output

```
NAME                                STATUS   AGE
capd-system                         Active   21m
capi-kubeadm-bootstrap-system       Active   21m
capi-kubeadm-control-plane-system   Active   21m
capi-system                         Active   21m
capi-webhook-system                 Active   21m
cert-manager                        Active   22m
default                             Active   23m
eksa-packages                       Active   23m
eksa-system                         Active   20m
kube-node-lease                     Active   23m
kube-public                         Active   23m
kube-system                         Active   23m
```

You can now use the cluster like you would any Kubernetes cluster. Deploy the test application with:

```shell
kubectl apply -f "https://anywhere.eks.amazonaws.com/manifests/hello-eks-a.yaml"
```

Verify the test application in the deploy test application section.
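EKS Anywhere writes each cluster's kubeconfig to `<cluster-name>/<cluster-name>-eks-a-cluster.kubeconfig` in the directory where you ran the create command. The following is a minimal sketch of a helper that switches to a cluster by name and fails loudly if that file is missing. `use_eksa_cluster` is a hypothetical name. The sketch assumes bash and that you run it from that same directory.

```shell
#!/usr/bin/env bash
# Points KUBECONFIG at the kubeconfig EKS Anywhere generated for cluster $1.
use_eksa_cluster() {
  local name="$1"
  local path="${PWD}/${name}/${name}-eks-a-cluster.kubeconfig"
  if [ -z "$name" ] || [ ! -f "$path" ]; then
    echo "kubeconfig not found: $path" >&2
    return 1
  fi
  export KUBECONFIG="$path"
  echo "KUBECONFIG=$KUBECONFIG"
}
```

For example, `use_eksa_cluster mgmt && kubectl get ns` reproduces the check above.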

Create management/workload clusters

To try the recommended EKS Anywhere topology, you can create a management cluster and one or more workload clusters on the same Docker provider.

Prerequisite Checklist

To install the EKS Anywhere binaries and see system requirements, please follow the installation guide.

Create a management cluster

Generate a management cluster config (named `mgmt` for this example):

```shell
CLUSTER_NAME=mgmt
eksctl anywhere generate clusterconfig $CLUSTER_NAME \
    --provider docker > eksa-mgmt-cluster.yaml
```

Modify the management cluster config (`eksa-mgmt-cluster.yaml`). You can use the same one described earlier or modify it to use GitOps, as shown below:

```yaml
apiVersion: anywhere.eks.amazonaws.com/v1alpha1
kind: Cluster
metadata:
  name: mgmt
  namespace: default
spec:
  bundlesRef:
    apiVersion: anywhere.eks.amazonaws.com/v1alpha1
    name: bundles-1
    namespace: eksa-system
  clusterNetwork:
    cniConfig:
      cilium: {}
    pods:
      cidrBlocks:
      - 192.168.0.0/16
    services:
      cidrBlocks:
      - 10.96.0.0/12
  controlPlaneConfiguration:
    count: 1
  datacenterRef:
    kind: DockerDatacenterConfig
    name: mgmt
  externalEtcdConfiguration:
    count: 1
  gitOpsRef:
    kind: FluxConfig
    name: mgmt
  kubernetesVersion: "1.25"
  managementCluster:
    name: mgmt
  workerNodeGroupConfigurations:
  - count: 1
    name: md-1
---
apiVersion: anywhere.eks.amazonaws.com/v1alpha1
kind: DockerDatacenterConfig
metadata:
  name: mgmt
  namespace: default
spec: {}
---
apiVersion: anywhere.eks.amazonaws.com/v1alpha1
kind: FluxConfig
metadata:
  name: mgmt
  namespace: default
spec:
  branch: main
  clusterConfigPath: clusters/mgmt
  github:
    owner: <your github account, such as example for https://github.com/example>
    personal: true
    repository: <your github repo, such as test for https://github.com/example/test>
  systemNamespace: flux-system
```

Configure Curated Packages

The Amazon EKS Anywhere Curated Packages are only available to customers with the Amazon EKS Anywhere Enterprise Subscription. To request a free trial, talk to your Amazon representative or connect with one here. Cluster creation will succeed if authentication is not set up, but some warnings may be generated. Detailed package configurations can be found here.

If you are going to use packages, set up authentication. These credentials should have limited capabilities:

```shell
export EKSA_AWS_ACCESS_KEY_ID="your_access_id"
export EKSA_AWS_SECRET_ACCESS_KEY="your_secret_key"
```

Create cluster:

For a regular cluster create (with internet access), type the following:

```shell
eksctl anywhere create cluster -f eksa-mgmt-cluster.yaml
```

For an airgapped cluster create, follow the Preparation for airgapped deployments instructions, then type the following:

```shell
eksctl anywhere create cluster -f eksa-mgmt-cluster.yaml \
    --bundles-override ./eks-anywhere-downloads/bundle-release.yaml
```

To install curated packages at cluster creation, add `--install-packages packages.yaml` to either command.

Once the cluster is created you can use it with the generated `KUBECONFIG` file in your local directory:

```shell
export KUBECONFIG=${PWD}/${CLUSTER_NAME}/${CLUSTER_NAME}-eks-a-cluster.kubeconfig
```

Check the initial cluster's CRD:

To ensure you are looking at the initial cluster, list the CRD to see that the name of its management cluster is itself:

```shell
kubectl get clusters mgmt -o yaml
```

Example command output

```yaml
...
kubernetesVersion: "1.25"
managementCluster:
  name: mgmt
workerNodeGroupConfigurations:
...
```
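You can script the same check by reading only the `managementCluster.name` field with a JSONPath query instead of scanning the full YAML. The following is a minimal sketch that assumes bash and a `KUBECONFIG` that already points at the cluster. `is_self_managed` is a hypothetical helper. It uses the fully qualified resource name `clusters.anywhere.eks.amazonaws.com` to avoid any ambiguity with the Cluster API `clusters.cluster.x-k8s.io` resource that also exists on these clusters.

```shell
#!/usr/bin/env bash
# Succeeds if the EKS Anywhere Cluster object named $1 lists itself as its management cluster.
is_self_managed() {
  local name="$1" mgmt
  mgmt="$(kubectl get clusters.anywhere.eks.amazonaws.com "$name" \
    -o jsonpath='{.spec.managementCluster.name}')" || return 1
  [ -n "$mgmt" ] && [ "$mgmt" = "$name" ]
}
```

Once workload clusters exist, running `is_self_managed w01` against the management cluster returns non-zero, because that object's `managementCluster.name` is `mgmt`.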

Create separate workload clusters

Follow these steps to have your management cluster create and manage separate workload clusters.

Generate a workload cluster config:

```shell
CLUSTER_NAME=w01
eksctl anywhere generate clusterconfig $CLUSTER_NAME \
    --provider docker > eksa-w01-cluster.yaml
```

Refer to the initial config described earlier for the required and optional settings.

NOTE: Ensure workload cluster object names (`Cluster`, `DockerDatacenterConfig`, etc.) are distinct from management cluster object names. Be sure to set the `managementCluster` field to identify the name of the management cluster.

Create a workload cluster in one of the following ways:

- GitOps: See Manage separate workload clusters with GitOps.

- Terraform: See Manage separate workload clusters with Terraform.

- Kubernetes CLI: The cluster lifecycle feature lets you use `kubectl` to manage a workload cluster. For example:

  ```shell
  kubectl apply -f eksa-w01-cluster.yaml
  ```

- eksctl CLI: Useful for temporary cluster configurations. To create a workload cluster with `eksctl`, do one of the following. For a regular cluster create (with internet access), type the following:

  ```shell
  eksctl anywhere create cluster \
      -f eksa-w01-cluster.yaml \
      --kubeconfig mgmt/mgmt-eks-a-cluster.kubeconfig
  ```

  For an airgapped cluster create, follow the Preparation for airgapped deployments instructions, then type the following:

  ```shell
  eksctl anywhere create cluster \
      -f eksa-w01-cluster.yaml \
      --bundles-override ./eks-anywhere-downloads/bundle-release.yaml \
      --kubeconfig mgmt/mgmt-eks-a-cluster.kubeconfig
  ```

  To install curated packages at cluster creation, add `--install-packages packages.yaml` to either command. As noted earlier, adding the `--kubeconfig` option tells `eksctl` to use the management cluster identified by that kubeconfig file to create a different workload cluster.

To check the workload cluster, get the workload cluster credentials and run a test workload:

- If your workload cluster was created with `eksctl`, change your credentials to point to the new workload cluster (for example, `w01`), then run the test application with:

  ```shell
  export CLUSTER_NAME=w01
  export KUBECONFIG=${PWD}/${CLUSTER_NAME}/${CLUSTER_NAME}-eks-a-cluster.kubeconfig
  kubectl apply -f "https://anywhere.eks.amazonaws.com/manifests/hello-eks-a.yaml"
  ```

- If your workload cluster was created with GitOps or Terraform, you can get credentials and run the test application as follows:

  ```shell
  kubectl get secret -n eksa-system w01-kubeconfig -o jsonpath='{.data.value}' | base64 --decode > w01.kubeconfig
  export KUBECONFIG=w01.kubeconfig
  kubectl apply -f "https://anywhere.eks.amazonaws.com/manifests/hello-eks-a.yaml"
  ```

  NOTE: For Docker, you must modify the `server` field of the kubeconfig file by replacing the IP with `127.0.0.1` and the port with its value. The port's value can be found by running `docker ps` and checking the workload cluster's load balancer.
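You can script the Docker-specific kubeconfig edit from the note above. The following is a minimal sketch that assumes bash and makes two assumptions about the Cluster API Docker provider that you should verify against your own `docker ps` output. First, it assumes the load balancer container is named `<cluster-name>-lb`. Second, it assumes that container publishes the API server on container port `6443/tcp`. The helper names are hypothetical. The sketch writes through a temporary file instead of using `sed -i`, whose syntax differs between GNU and macOS `sed`.

```shell
#!/usr/bin/env bash
# Prints the host port that the workload cluster's load balancer publishes.
# Assumes the container is named "<cluster>-lb" and exposes 6443/tcp.
lb_host_port() {
  docker port "$1-lb" 6443/tcp | head -n 1 | sed -E 's/.*:([0-9]+)$/\1/'
}

# Rewrites "server: https://<ip>:<port>" in kubeconfig $1 to use 127.0.0.1 and port $2.
set_kubeconfig_server() {
  local file="$1" port="$2"
  sed -E "s#(server: https://)[^:/]+:[0-9]+#\\1127.0.0.1:${port}#" "$file" > "$file.tmp" \
    && mv "$file.tmp" "$file"
}
```

For example: `set_kubeconfig_server w01.kubeconfig "$(lb_host_port w01)"`.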

Add more workload clusters:

To add more workload clusters, go through the same steps for creating the initial workload cluster, copying the config file to a new name (such as `eksa-w02-cluster.yaml`), modifying resource names, and running the create cluster command again.

Next steps:

- See the Cluster management section for more information on common operational tasks like scaling and deleting the cluster.

- See the Package management section for more information on post-creation curated packages installation.

To verify that a cluster control plane is up and running, use the kubectl command to show that the control plane pods are all running.

```shell
kubectl get po -A -l control-plane=controller-manager
```

```
NAMESPACE                           NAME                                                             READY   STATUS    RESTARTS   AGE
capi-kubeadm-bootstrap-system       capi-kubeadm-bootstrap-controller-manager-57b99f579f-sd85g       2/2     Running   0          47m
capi-kubeadm-control-plane-system   capi-kubeadm-control-plane-controller-manager-79cdf98fb8-ll498   2/2     Running   0          47m
capi-system                         capi-controller-manager-59f4547955-2ks8t                         2/2     Running   0          47m
capi-webhook-system                 capi-controller-manager-bb4dc9878-2j8mg                          2/2     Running   0          47m
capi-webhook-system                 capi-kubeadm-bootstrap-controller-manager-6b4cb6f656-qfppd       2/2     Running   0          47m
capi-webhook-system                 capi-kubeadm-control-plane-controller-manager-bf7878ffc-rgsm8    2/2     Running   0          47m
capi-webhook-system                 capv-controller-manager-5668dbcd5-v5szb                          2/2     Running   0          47m
capv-system                         capv-controller-manager-584886b7bd-f66hs                         2/2     Running   0          47m
```
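On a larger cluster, it is easier to print only the pods that need attention. The following is a minimal sketch that assumes bash and the column layout that `kubectl get pods -A --no-headers` prints: NAMESPACE, NAME, READY, STATUS, RESTARTS, AGE. `unhealthy_pods` is a hypothetical filter.

```shell
#!/usr/bin/env bash
# Reads `kubectl get pods -A --no-headers` output on stdin and prints each pod
# that is not Running or whose READY count (e.g. 1/2) is incomplete.
unhealthy_pods() {
  awk '{
    split($3, ready, "/")
    if ($4 != "Running" || ready[1] != ready[2]) print $1 "/" $2 " " $3 " " $4
  }'
}
```

For example, `kubectl get po -A -l control-plane=controller-manager --no-headers | unhealthy_pods` prints nothing when every controller manager is fully ready.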

You may also check the status of the cluster control plane resource directly. This can be especially useful to verify clusters with multiple control plane nodes after an upgrade.

```shell
kubectl get kubeadmcontrolplanes.controlplane.cluster.x-k8s.io
```

```
NAME                       INITIALIZED   API SERVER AVAILABLE   VERSION              REPLICAS   READY   UPDATED   UNAVAILABLE
supportbundletestcluster   true          true                   v1.20.7-eks-1-20-6   1          1       1
```

To verify that the expected number of cluster worker nodes are up and running, use the kubectl command to show that nodes are Ready. This will confirm that the expected number of worker nodes are present. Worker nodes are named using the cluster name followed by the worker node group name (example: my-cluster-md-0).

```shell
kubectl get nodes
```

```
NAME                                           STATUS   ROLES                  AGE   VERSION
supportbundletestcluster-md-0-55bb5ccd-mrcf9   Ready    <none>                 4m    v1.20.7-eks-1-20-6
supportbundletestcluster-md-0-55bb5ccd-zrh97   Ready    <none>                 4m    v1.20.7-eks-1-20-6
supportbundletestcluster-mdrwf                 Ready    control-plane,master   5m    v1.20.7-eks-1-20-6
```
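You can compare the number of Ready nodes with what you configured, which is the control plane count plus the worker `count` values. The following is a minimal sketch that assumes bash. `count_ready_nodes` and `expect_ready_nodes` are hypothetical helpers that read `kubectl get nodes --no-headers` output (NAME, STATUS, ROLES, AGE, VERSION).

```shell
#!/usr/bin/env bash
# Counts lines on stdin whose STATUS column is exactly "Ready".
# Nodes reported as "Ready,SchedulingDisabled" (for example while draining) are not counted.
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Succeeds if the current cluster reports exactly $1 Ready nodes.
expect_ready_nodes() {
  local expected="$1" actual
  actual="$(kubectl get nodes --no-headers | count_ready_nodes)"
  echo "Ready nodes: $actual (expected $expected)"
  [ "$actual" -eq "$expected" ]
}
```

The example output above has one control plane node and two `md-0` workers, so `expect_ready_nodes 3` would succeed for it.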

To test a workload in your cluster, you can try deploying hello-eks-anywhere.

2.4 - Preparation needed for hosting EKS Anywhere on vSphere

Certain resources must be in place with appropriate user permissions to create an EKS Anywhere cluster using the vSphere provider.

Configuring Folder Resources

Create a VM folder:

For each user that needs to create workload clusters, have the vSphere administrator create a VM folder. That folder will host:

- The VMs of the Control plane and Data plane nodes of each cluster.

- A nested folder for the management cluster and another one for each workload cluster.

- Each cluster VM in its own nested folder under this folder.

Follow these steps to create the user’s vSphere folder:

- From vCenter, select the Menus/VM and Template tab.

- Select either a datacenter or another folder as a parent object for the folder that you want to create.

- Right-click the parent object and click New Folder.

- Enter a name for the folder and click OK.

For more details, see the vSphere Create a Folder documentation.
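If you prefer the command line, govc provides `govc folder.create`. The sketch below is an illustration under assumptions rather than an official procedure: `create_eksa_folders` and `run_cmd` are hypothetical helpers, the paths are placeholders, the parent of the first folder is assumed to exist already, and setting `DRY_RUN=1` prints the govc commands instead of running them.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run_cmd() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$@"
  else
    "$@"
  fi
}

# Hypothetical helper: create the per-user VM folder, then one nested folder per
# cluster inside it. Assumes the parent of the first folder already exists.
create_eksa_folders() {
  local parent cluster
  parent=$1
  shift
  run_cmd govc folder.create "$parent" || return 1
  for cluster in "$@"; do
    run_cmd govc folder.create "$parent/$cluster" || return 1
  done
}
```

For example, with `DRY_RUN=1` set, `create_eksa_folders "/MyDatacenter/vm/EKS Anywhere" mgmt-cluster w01-cluster` prints three `govc folder.create` commands: one for the user folder and one for each cluster folder.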

Configuring vSphere User, Group, and Roles

You need a vSphere user with the right privileges to let you create EKS Anywhere clusters on top of your vSphere cluster.

Configure via EKSA CLI

To configure a new user via the CLI, you will need two things:

- A set of vSphere admin credentials with the ability to create users and groups. If you do not have the rights to create new groups and users, you can invoke govc commands directly, as outlined in Configure via govc below.

- A `user.yaml` file:

```yaml
apiVersion: "eks-anywhere.amazon.com/v1"
kind: vSphereUser
spec:
  username: "eksa"                # optional, default eksa
  group: "MyExistingGroup"        # optional, default EKSAUsers
  globalRole: "MyGlobalRole"      # optional, default EKSAGlobalRole
  userRole: "MyUserRole"          # optional, default EKSAUserRole
  adminRole: "MyEKSAAdminRole"    # optional, default EKSACloudAdminRole
  datacenter: "MyDatacenter"
  vSphereDomain: "vsphere.local"  # this should be the domain used when you login, e.g. YourUsername@vsphere.local
  connection:
    server: "https://my-vsphere.internal.acme.com"
    insecure: false
  objects:
    networks:
      - !!str "/MyDatacenter/network/My Network"
    datastores:
      - !!str "/MyDatacenter/datastore/MyDatastore2"
    resourcePools:
      - !!str "/MyDatacenter/host/Cluster-03/MyResourcePool" # NOTE: see below if you do not want to use a resource pool
    folders:
      - !!str "/MyDatacenter/vm/OrgDirectory/MyVMs"
    templates:
      - !!str "/MyDatacenter/vm/Templates/MyTemplates"
```

NOTE: if you do not want to create a resource pool, you can instead specify the cluster directly as /MyDatacenter/host/Cluster-03 in user.yaml, where Cluster-03 is your cluster name. In your cluster spec, you will need to specify /MyDatacenter/host/Cluster-03/Resources for the resourcePool field.

Set the admin credentials as environment variables:

```shell
export EKSA_VSPHERE_USERNAME=<ADMIN_VSPHERE_USERNAME>
export EKSA_VSPHERE_PASSWORD=<ADMIN_VSPHERE_PASSWORD>
```
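Because the setup command reads these variables, it can help to fail early when one is missing. A minimal sketch: `require_env` is a hypothetical helper, and it only checks that each named variable is set and non-empty, not that it has been exported.

```shell
# Hypothetical helper: report every named variable that is unset or empty and
# return non-zero if any are missing. Does not check that they are exported.
require_env() {
  local name value missing
  missing=0
  for name in "$@"; do
    # Indirect lookup of the variable called $name (POSIX sh has no ${!name}).
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing required environment variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example: `require_env EKSA_VSPHERE_USERNAME EKSA_VSPHERE_PASSWORD && eksctl anywhere exp vsphere setup user -f user.yaml --password '<NewUserPassword>'`.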

If the user does not already exist, you can create the user and all the specified group and role objects by running:

```shell
eksctl anywhere exp vsphere setup user -f user.yaml --password '<NewUserPassword>'
```

If the user or any of the group or role objects already exist, use the force flag instead to overwrite Group-Role-Object mappings for the group, roles, and objects specified in the user.yaml config file:

```shell
eksctl anywhere exp vsphere setup user -f user.yaml --force
```

Note that one more manual step is required to configure global permissions; see Manually set Global Permissions role in Global Permissions UI below.

Configure via govc

If you do not have the rights to create a new user, you can still configure the necessary roles and permissions using the govc CLI.

```shell
#!/bin/bash
# govc calls to configure a user with minimal permissions
set -x
set -e

EKSA_USER='<Username>@<UserDomain>'
USER_ROLE='EKSAUserRole'
GLOBAL_ROLE='EKSAGlobalRole'
ADMIN_ROLE='EKSACloudAdminRole'
FOLDER_VM='/YourDatacenter/vm/YourVMFolder'
FOLDER_TEMPLATES='/YourDatacenter/vm/Templates'
NETWORK='/YourDatacenter/network/YourNetwork'
DATASTORE='/YourDatacenter/datastore/YourDatastore'
RESOURCE_POOL='/YourDatacenter/host/Cluster-01/Resources/YourResourcePool'

# Create the three roles from the privilege lists published in the eks-anywhere repo.
# jq -r '.[]' prints each privilege ID on its own line; the unquoted command
# substitution then passes them to govc as separate arguments.
govc role.create "$GLOBAL_ROLE" $(curl -fsSL https://raw.githubusercontent.com/aws/eks-anywhere/main/pkg/config/static/globalPrivs.json | jq -r '.[]')
govc role.create "$USER_ROLE" $(curl -fsSL https://raw.githubusercontent.com/aws/eks-anywhere/main/pkg/config/static/eksUserPrivs.json | jq -r '.[]')
govc role.create "$ADMIN_ROLE" $(curl -fsSL https://raw.githubusercontent.com/aws/eks-anywhere/main/pkg/config/static/adminPrivs.json | jq -r '.[]')

# Grant the roles to the user on the relevant inventory objects.
govc permissions.set -group=false -principal "$EKSA_USER" -role "$GLOBAL_ROLE" /
govc permissions.set -group=false -principal "$EKSA_USER" -role "$ADMIN_ROLE" "$FOLDER_VM"
govc permissions.set -group=false -principal "$EKSA_USER" -role "$ADMIN_ROLE" "$FOLDER_TEMPLATES"
govc permissions.set -group=false -principal "$EKSA_USER" -role "$USER_ROLE" "$NETWORK"
govc permissions.set -group=false -principal "$EKSA_USER" -role "$USER_ROLE" "$DATASTORE"
govc permissions.set -group=false -principal "$EKSA_USER" -role "$USER_ROLE" "$RESOURCE_POOL"
```

NOTE: if you do not want to create a resource pool, you can instead set RESOURCE_POOL to the cluster directly, for example /YourDatacenter/host/Cluster-01, where Cluster-01 is your cluster name. In your cluster spec, you will need to specify /YourDatacenter/host/Cluster-01/Resources for the resourcePool field.

Note that one more manual step is required to configure global permissions; see Manually set Global Permissions role in Global Permissions UI below.

Configure via UI

Add a vCenter User

Ask your vSphere administrator to add a vCenter user that will be used for provisioning the EKS Anywhere cluster in VMware vSphere.

- Log in with the vSphere Client to the vCenter Server.

- Specify the user name and password for a member of the vCenter Single Sign-On Administrators group.

- Navigate to the vCenter Single Sign-On user configuration UI.

- From the Home menu, select Administration.

- Under Single Sign On, click Users and Groups.

- If vsphere.local is not the currently selected domain, select it from the drop-down menu. You cannot add users to other domains.

- On the Users tab, click Add.

- Enter a user name and password for the new user.

- The maximum number of characters allowed for the user name is 300.

- You cannot change the user name after you create a user. The password must meet the password policy requirements for the system.

- Click Add.

For more details, see the vSphere Add vCenter Single Sign-On Users documentation.

Create and define user roles

When you add a user for creating clusters, that user initially has no privileges to perform management operations. You therefore have to add this user to groups with the required permissions, or assign the user one or more roles with the required permissions.

Three roles are needed to create the EKS Anywhere cluster:

- Create a global custom role: For example, you could name this EKS Anywhere Global. Define it for the user on the vCenter domain level and its children objects. Create this role with the following privileges:

  - Content Library
    - Add library item
    - Check in a template
    - Check out a template
    - Create local library
    - Update files
  - vSphere Tagging
    - Assign or Unassign vSphere Tag
    - Assign or Unassign vSphere Tag on Object
    - Create vSphere Tag
    - Create vSphere Tag Category
    - Delete vSphere Tag
    - Delete vSphere Tag Category
    - Edit vSphere Tag
    - Edit vSphere Tag Category
    - Modify UsedBy Field For Category
    - Modify UsedBy Field For Tag
  - Sessions
    - Validate session

- Create a user custom role: The second role is also a custom role that you could call, for example, EKSAUserRole. Define this role on the following objects and their children objects:

  - The resource pool level and its children objects: the resource pool that the EKS Anywhere VMs will be part of.
  - The storage object level and its children objects: the storage that will be used to store the cluster VMs.
  - The network VLAN object level and its children objects: the network that will host the cluster VMs.
  - The VM and Template folder level and its children objects.

  Create this role with the following privileges:

  - Content Library
    - Add library item
    - Check in a template
    - Check out a template
    - Create local library
  - Datastore
    - Allocate space
    - Browse datastore
    - Low level file operations
  - Folder
    - Create folder
  - vSphere Tagging
    - Assign or Unassign vSphere Tag
    - Assign or Unassign vSphere Tag on Object
    - Create vSphere Tag
    - Create vSphere Tag Category
    - Delete vSphere Tag
    - Delete vSphere Tag Category
    - Edit vSphere Tag
    - Edit vSphere Tag Category
    - Modify UsedBy Field For Category
    - Modify UsedBy Field For Tag
  - Network
    - Assign network
  - Resource
    - Assign virtual machine to resource pool
  - Scheduled task
    - Create tasks
    - Modify task
    - Remove task
    - Run task
  - Profile-driven storage
    - Profile-driven storage view
  - Storage views
    - View
  - vApp
    - Import
  - Virtual machine
    - Change Configuration
      - Add existing disk
      - Add new disk
      - Add or remove device
      - Advanced configuration
      - Change CPU count
      - Change Memory
      - Change Settings
      - Configure Raw device
      - Extend virtual disk
      - Modify device settings
      - Remove disk
    - Edit Inventory
      - Create from existing
      - Create new
      - Remove
    - Interaction
      - Power off
      - Power on
    - Provisioning
      - Clone template
      - Clone virtual machine
      - Create template from virtual machine
      - Customize guest
      - Deploy template
      - Mark as template
      - Read customization specifications
    - Snapshot management
      - Create snapshot
      - Remove snapshot
      - Revert to snapshot

- Use the default Administrator role: The third role is the default system role, Administrator, which you assign to the user on the level of the folder that the vSphere administrator created for you, and on its children objects (VMs and OVA templates).

To create a role and define privileges, see the Create a vCenter Server Custom Role page.

Manually set Global Permissions role in Global Permissions UI

vSphere does not currently support a public API for setting global permissions. Because of this, you will need to manually assign the Global Role you created to your user or group in the Global Permissions UI.

Deploy an OVA Template

If the user creating the cluster has permission and network access to create and tag a template, you can skip these steps because EKS Anywhere will automatically download the OVA and create the template if it can. If the user does not have the permissions or network access to create and tag the template, follow this guide. The OVA contains the operating system (Ubuntu, Bottlerocket, or RHEL) for a specific EKS Distro Kubernetes release and EKS Anywhere version. The following example uses Ubuntu as the operating system, but a similar workflow would work for Bottlerocket or RHEL.

Steps to deploy the OVA

- Go to the artifacts page and download or build the OVA template with the newest EKS Distro Kubernetes release to your computer.

- Log in to the vCenter Server.

- Right-click the folder you created above and select Deploy OVF Template. The Deploy OVF Template wizard opens.

- On the Select an OVF template page, select the Local file option, specify the location of the OVA template you downloaded to your computer, and click Next.

- On the Select a name and folder page, enter a unique name for the virtual machine or leave the default generated name, if you do not have other templates with the same name within your vCenter Server virtual machine folder. The default deployment location for the virtual machine is the inventory object where you started the wizard, which is the folder you created above. Click Next.

- On the Select a compute resource page, select the resource pool in which to run the deployed VM template, and click Next.

- On the Review details page, verify the OVF or OVA template details and click Next.

- On the Select storage page, select a datastore to store the deployed OVF or OVA template and click Next.

- On the Select networks page, select a source network and map it to a destination network. Click Next.

- On the Ready to complete page, review the page and click Finish. For details, see Deploy an OVF or OVA Template.
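The wizard steps above can also be scripted with govc. This sketch assumes govc is already configured (for example through the `GOVC_URL`, `GOVC_USERNAME`, and `GOVC_PASSWORD` variables set later in this workshop) and uses `govc import.ova` with its `-name`, `-folder`, `-pool`, and `-ds` flags, then `govc vm.markastemplate` to convert the result into a template. The helper names and paths are hypothetical, and the network-mapping step is not covered; govc can read that from an options file generated with `govc import.spec`.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run_cmd() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$@"
  else
    "$@"
  fi
}

# Hypothetical helper: import an OVA into the chosen folder, resource pool, and
# datastore, then convert the imported VM into a template.
deploy_ova_template() {
  local ova name folder pool datastore
  ova=$1 name=$2 folder=$3 pool=$4 datastore=$5
  run_cmd govc import.ova -name="$name" -folder="$folder" \
    -pool="$pool" -ds="$datastore" "$ova" || return 1
  run_cmd govc vm.markastemplate "$folder/$name"
}
```

With `DRY_RUN=1` set, the helper only prints the two govc commands, which is a convenient way to review the paths before touching vCenter.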

To build your own Ubuntu OVA template, see the Building your own Ubuntu OVA section of the EKS Anywhere documentation.

To use the deployed OVA template to create the VMs for the EKS Anywhere cluster, you have to tag it with specific values for the os and eksdRelease keys.

The value of the os key is the operating system of the deployed OVA template, which is ubuntu in our scenario.

The value of the eksdRelease key holds the Kubernetes version and the EKS-D release used in the deployed OVA template.

See the Customize OVAs page for more details.

Steps to tag the deployed OVA template:

- Go to the artifacts page and take note of the tags and values associated with the OVA template you deployed in the previous step.

- In the vSphere Client, select Menu > Tags & Custom Attributes.

- Select the Tags tab and click Tags.

- Click New.

- In the Create Tag dialog box, copy the `os` tag name associated with your OVA that you noted earlier, which in our case is `os:ubuntu`, and paste it as the name of the first required tag.

- Specify the tag category `os` if it exists, or create it if it does not exist.

- Click Create.

- Repeat steps 2-4.

- In the Create Tag dialog box, copy the `eksdRelease` tag name associated with your OVA that you noted earlier, which in our case is `eksdRelease:kubernetes-1-21-eks-8`, and paste it as the name of the second required tag.

- Specify the tag category `eksdRelease` if it exists, or create it if it does not exist.

- Click Create.

- Navigate to the VM and Template tab.

- Select the folder that was created.

- Select deployed template and click Actions.

- From the drop-down menu, select Tags and Custom Attributes > Assign Tag.

- Select the tags we created from the list and confirm the operation.
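The same tagging can be sketched with govc's tag commands (`tags.category.create`, `tags.create`, and `tags.attach`). Treat this as an illustration under assumptions: `tag_template` and `run_cmd` are hypothetical helpers, the template path is a placeholder, and errors from the category and tag creation calls are ignored so that rerunning the helper does not stop at objects that already exist.

```shell
# Hypothetical helper: print the command instead of running it when DRY_RUN=1.
run_cmd() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$@"
  else
    "$@"
  fi
}

# Hypothetical helper: ensure a tag category and tag exist, then attach the tag
# to the template. Errors from the two create calls are ignored (for example,
# when the category or tag already exists from an earlier run).
tag_template() {
  local category tag template
  category=$1 tag=$2 template=$3
  run_cmd govc tags.category.create -t VirtualMachine "$category" || true
  run_cmd govc tags.create -c "$category" "$tag" || true
  run_cmd govc tags.attach "$tag" "$template"
}
```

Call it once per required tag, for example `tag_template os os:ubuntu "$TEMPLATE"` and `tag_template eksdRelease eksdRelease:kubernetes-1-21-eks-8 "$TEMPLATE"`, where `TEMPLATE` holds the inventory path of the deployed template.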

To run EKS Anywhere, you will need:

Prepare Administrative machine

Set up an Administrative machine as described in Install EKS Anywhere.

Prepare a VMware vSphere environment

To prepare a VMware vSphere environment to run EKS Anywhere, you need the following:

- A vSphere 7+ environment running vCenter
- Capacity to deploy 6-10 VMs
- A DHCP service running in the vSphere environment in the primary VM network for your workload cluster
- One network in vSphere to use for the cluster. EKS Anywhere clusters need access to vCenter through the network to enable self-managing and storage capabilities.
- An OVA imported into vSphere and converted into a template for the workload VMs
- User credentials to create VMs and attach networks, etc.
- One IP address routable from the cluster but excluded from the DHCP offering. This IP address is to be used as the Control Plane Endpoint IP.

Below are some suggestions to ensure that this IP address is never handed out by your DHCP server.

You may need to contact your network engineer.

- Pick an IP address reachable from cluster subnet which is excluded from DHCP range OR

- Alter DHCP ranges to leave out an IP address(s) at the top and/or the bottom of the range OR

- Create an IP reservation for this IP on your DHCP server. This is usually accomplished by adding a dummy mapping of this IP address to a non-existent MAC address.
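Before settling on an endpoint address, you can sanity-check it against the DHCP pool. The sketch below converts dotted IPv4 addresses to integers and tests whether the candidate falls outside an inclusive start-end range. The function names are hypothetical, and it assumes your DHCP pool is a single contiguous range.

```shell
# Hypothetical helper: convert a dotted IPv4 address to an integer.
# Fails (exit status 1) on anything that is not four octets in 0-255.
ip_to_int() {
  echo "$1" | awk -F. '
    NF == 4 {
      n = 0
      for (i = 1; i <= 4; i++) {
        if ($i !~ /^[0-9]+$/ || $i + 0 > 255) exit 1
        n = n * 256 + $i
      }
      printf "%.0f\n", n
      exit 0
    }
    { exit 1 }
  '
}

# Hypothetical helper: succeed (0) if IP lies outside the inclusive DHCP range
# START-END, return 1 if it falls inside, and 2 if any address is malformed.
outside_dhcp_range() {
  local ip start end
  ip=$(ip_to_int "$1") && start=$(ip_to_int "$2") && end=$(ip_to_int "$3") || return 2
  [ "$ip" -lt "$start" ] || [ "$ip" -gt "$end" ]
}
```

For example, `outside_dhcp_range 192.168.1.250 192.168.1.100 192.168.1.200` succeeds because .250 lies above the pool, while 192.168.1.150 returns 1 because it falls inside it.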

Each VM will require:

- 2 vCPUs

- 8GB RAM

- 25GB Disk
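Multiplying these per-VM figures by the 6-10 VMs mentioned above gives a rough total to reserve. A minimal arithmetic sketch; `cluster_capacity` is a hypothetical helper, and it ignores the admin machine and any hypervisor overhead.

```shell
# Hypothetical helper: total vCPU, RAM, and disk for a given number of cluster
# VMs, using the per-VM sizing above (2 vCPUs, 8 GB RAM, 25 GB disk).
cluster_capacity() {
  local vms
  vms=$1
  echo "vCPUs=$((vms * 2)) RAM_GB=$((vms * 8)) disk_GB=$((vms * 25))"
}
```

`cluster_capacity 6` prints `vCPUs=12 RAM_GB=48 disk_GB=150`, and `cluster_capacity 10` prints `vCPUs=20 RAM_GB=80 disk_GB=250`.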

The administrative machine and the target workload environment will need network access to:

- vCenter endpoint (must be accessible to EKS Anywhere clusters)

- public.ecr.aws

- anywhere-assets.eks.amazonaws.com (to download the EKS Anywhere binaries, manifests and OVAs)

- distro.eks.amazonaws.com (to download EKS Distro binaries and manifests)

- d2glxqk2uabbnd.cloudfront.net (for EKS Anywhere and EKS Distro ECR container images)

- api.ecr.us-west-2.amazonaws.com (for EKS Anywhere package authentication matching your region)

- d5l0dvt14r5h8.cloudfront.net (for EKS Anywhere package ECR container images)

- api.github.com (only if GitOps is enabled)
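One way to confirm this access from the admin machine (and, ideally, from a VM on the cluster network) is to open an HTTPS connection to each endpoint. This is a sketch under assumptions: `check_endpoints` is a hypothetical helper, curl is assumed to be installed, and any HTTP response counts as reachable because curl without `-f` exits successfully even on 4xx or 5xx replies. A vCenter with a self-signed certificate will be reported as FAIL, since curl verifies certificates.

```shell
# Endpoints from the list above; replace us-west-2 with your region.
# api.github.com is only needed if GitOps is enabled.
EKSA_ENDPOINTS="public.ecr.aws
anywhere-assets.eks.amazonaws.com
distro.eks.amazonaws.com
d2glxqk2uabbnd.cloudfront.net
api.ecr.us-west-2.amazonaws.com
d5l0dvt14r5h8.cloudfront.net
api.github.com"

# Hypothetical helper: try an HTTPS request to each host and report the result.
# Without -f, curl exits 0 on any HTTP response, so this checks DNS, routing,
# proxy, and TLS reachability rather than a particular status code.
check_endpoints() {
  local host failed
  failed=0
  for host in "$@"; do
    if curl -sS --max-time 10 -o /dev/null "https://$host"; then
      echo "ok   $host"
    else
      echo "FAIL $host" >&2
      failed=1
    fi
  done
  return "$failed"
}
```

For example: `check_endpoints $EKSA_ENDPOINTS vcenter.example.com`, leaving `$EKSA_ENDPOINTS` unquoted so that each line becomes a separate argument.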

vSphere information needed before creating the cluster

You need to get the following information before creating the cluster:

- Static IP Addresses: You will need one IP address for the management cluster control plane endpoint, and a separate one for the control plane of each workload cluster you add.

  Let's say you are going to have the management cluster and two workload clusters. For those, you would need three IP addresses, one for each. All of those addresses will be configured the same way in the configuration file you will generate for each cluster.

  A static IP address will be used for the control plane endpoint of each EKS Anywhere cluster. Choose IP addresses in your network range that do not conflict with other VMs and make sure they are excluded from your DHCP offering.

  This IP address will be the value of the `controlPlaneConfiguration.endpoint.host` property in the config file of the management cluster. A separate IP address must be assigned for each workload cluster.

-

vSphere Datacenter Name: The vSphere datacenter to deploy the EKS Anywhere cluster on.

-

VM Network Name: The VM network to deploy your EKS Anywhere cluster on.

-

vCenter Server Domain Name: The vCenter server fully qualified domain name or IP address. If the server IP is used, the thumbprint must be set or insecure must be set to true.

-

thumbprint (required if insecure=false): The SHA1 thumbprint of the vCenter server certificate which is only required if you have a self-signed certificate for your vSphere endpoint.

There are several ways to obtain your vCenter thumbprint. If you have govc installed

, you can run the following command in the Administrative machine terminal, and take a note of the output:

govc about.cert -thumbprint -k -

template: The VM template to use for your EKS Anywhere cluster. This template was created when you imported the OVA file into vSphere.

-

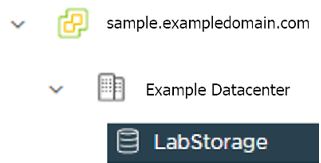

datastore: The vSphere datastore

to deploy your EKS Anywhere cluster on.

-

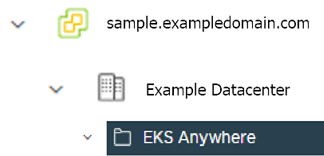

folder: The folder parameter in VSphereMachineConfig allows you to organize the VMs of an EKS Anywhere cluster. With this, each cluster can be organized as a folder in vSphere. You will have a separate folder for the management cluster and each cluster you are adding.

-

resourcePool: The vSphere Resource pools for your VMs in the EKS Anywhere cluster. If there is a resource pool:

/<datacenter>/host/<resource-pool-name>/Resources
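If govc is not available, the thumbprint can also be read with openssl. This sketch assumes openssl is installed on the admin machine; `vcenter_thumbprint` and `parse_fingerprint` are hypothetical helpers. It prints the SHA-1 fingerprint of the certificate that the server presents, in the colon-separated format used by the `thumbprint` field.

```shell
# Hypothetical helper: keep only the hex fingerprint from openssl output such as
# "SHA1 Fingerprint=BF:B5:..." (the prefix differs between OpenSSL versions).
parse_fingerprint() {
  sed -n 's/^.*[Ff]ingerprint=//p' | tr '[:lower:]' '[:upper:]'
}

# Hypothetical helper: fetch the certificate presented by vCenter and print its
# SHA-1 fingerprint in colon-separated form.
vcenter_thumbprint() {
  local host port
  host=$1
  port=${2:-443}
  openssl s_client -connect "$host:$port" -servername "$host" </dev/null 2>/dev/null \
    | openssl x509 -noout -fingerprint -sha1 \
    | parse_fingerprint
}
```

For example: `vcenter_thumbprint vcenter.example.com`. Compare the result with the output of `govc about.cert -thumbprint -k` if you have both tools.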

2.5 - vSphere cluster

EKS Anywhere supports a vSphere provider for production-grade EKS Anywhere deployments. EKS Anywhere allows you to provision and manage Amazon EKS on your own infrastructure.

This document walks you through setting up EKS Anywhere in a way that:

- Deploys an initial cluster on your vSphere environment. That cluster can be used as a self-managed cluster (to run workloads) or a management cluster (to create and manage other clusters).

- Deploys zero or more workload clusters from the management cluster

If your initial cluster is a management cluster, it is intended to stay in place so you can use it later to modify, upgrade, and delete workload clusters. Using a management cluster makes it faster to provision and delete workload clusters. It also lets you keep vSphere credentials for a set of clusters in one place: on the management cluster. The alternative is to simply use your initial cluster to run workloads.

Important

Creating an EKS Anywhere management cluster is the recommended model. Separating management features into a separate, persistent management cluster provides a cleaner model for managing the lifecycle of workload clusters (to create, upgrade, and delete clusters), while workload clusters run user applications. This approach also reduces provider permissions for workload clusters.

Prerequisite Checklist

EKS Anywhere needs to be run on an administrative machine that has certain machine requirements. An EKS Anywhere deployment will also require the availability of certain resources from your VMware vSphere deployment.

Steps

The following steps are divided into two sections:

- Create an initial cluster (used as a management or self-managed cluster)

- Create zero or more workload clusters from the management cluster

Create an initial cluster

Follow these steps to create an EKS Anywhere cluster that can be used either as a management cluster or as a self-managed cluster (for running workloads itself).

All steps listed below should be executed on the admin machine with reachability to the vSphere environment where the EKS Anywhere clusters are created.

- Generate an initial cluster config (named `mgmt-cluster` for this example):

  ```shell
  export MGMT_CLUSTER_NAME=mgmt-cluster
  eksctl anywhere generate clusterconfig $MGMT_CLUSTER_NAME \
     --provider vsphere > $MGMT_CLUSTER_NAME.yaml
  ```

  The command above creates a config file named `mgmt-cluster.yaml` in the path where it is executed. Refer to the vSphere configuration documentation for information on configuring this cluster config for a vSphere provider.

The configuration specification is divided into three sections:

- Cluster

- VSphereDatacenterConfig

- VSphereMachineConfig

Some key considerations and configuration parameters:

- Create at least two control plane nodes, three worker nodes, and three etcd nodes for a production cluster, to provide high availability and rolling upgrades.

- The osFamily (operating system on virtual machines) parameter in VSphereMachineConfig is set to bottlerocket by default. Permitted values: ubuntu, bottlerocket.

- The recommended mode of deploying etcd on EKS Anywhere production clusters is unstacked (etcd members have dedicated machines and are not colocated with control plane components). The generated config file comes with external etcd already enabled, so leave this part as is.

- Apart from the base configuration, you can optionally add configuration to enable supported EKS Anywhere functionalities:

  - OIDC
  - etcd (comes by default with the generated config file)
  - proxy
  - GitOps
  - IAM Roles for Service Accounts
  - IAM Authenticator
  - container registry mirror

  At the time of writing, you have to decide which features to enable on your cluster before you create it; enabling them after creation requires deleting and recreating the cluster. However, the next EKS-A release will remove this limitation.

- To manage cluster resources using GitOps, you need to enable the GitOps configuration on the initial/management cluster. You cannot enable GitOps on workload clusters if you have enabled it on the initial/management cluster. If you want to use GitOps to manage the deployment of Kubernetes resources on a workload cluster, you need to bootstrap Flux against that workload cluster manually.

-

Modify the initial cluster generated config (

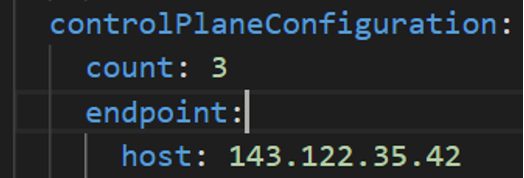

mgmt-cluster.yaml) as follows: You will notice that the generated config file comes with the following fields with empty values. All you need is to fill them with the values we gathered in the prerequisites page.-

Cluster: controlPlaneConfiguration.endpoint.host: ""

controlPlaneConfiguration: count: 3 endpoint: # Fill this value with the IP address you want to use for the management # cluster control plane endpoint. You will also need a separate one for the # controlplane of each workload cluster you add later. host: "" -

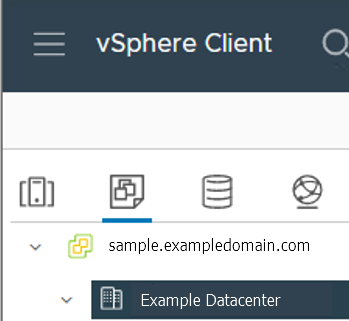

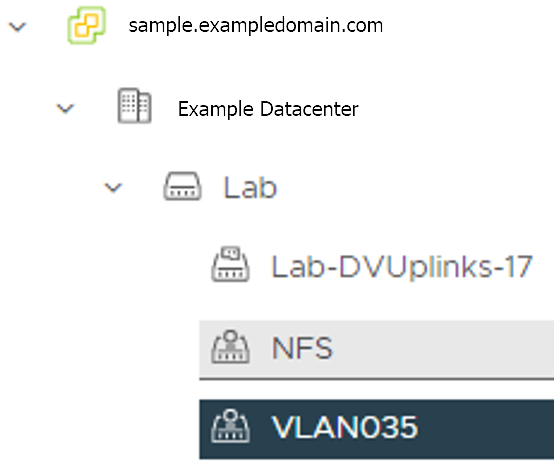

VSphereDatacenterConfig:

datacenter: "" # Fill it with the vSphere Datacenter Name. Example: "Example Datacenter" insecure: false network: "" # Fill it with VM Network Name. Example: "/Example Datacenter/network/VLAN035" server: "" # Fill it with the vCenter Server Domain Name. Example: "sample.exampledomain.com" thumbprint: "" # Fill it with the thumprint of your vCenter server. Example: "BF:B5:D4:C5:72:E4:04:40:F7:22:99:05:12:F5:0B:0E:D7:A6:35:36" -

VSphereMachineConfig sections:

datastore: "" # Fill in the vSphere datastore name: Example "/Example Datacenter/datastore/LabStorage" diskGiB: 25 # Fill in the folder name that the VMs of the cluster will be organized under. # You will have a separate folder for the management cluster and each cluster you are adding. folder: "" # Fill in the foler name Example: /Example Datacenter/vm/EKS Anywhere/mgmt-cluster memoryMiB: 8192 numCPUs: 2 osFamily: ubuntu # You can set it to botllerocket or ubuntu resourcePool: "" # Fill in the vSphere Resource pool. Example: /Example Datacenter/host/Lab/Resources- Remove the

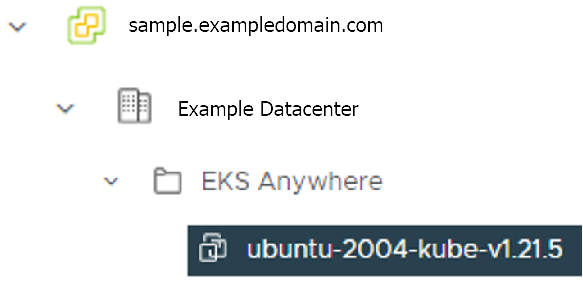

usersproperty, and it will be genrated during the cluster creation automatically. It will set the username tocapvif osFamily=ubuntu, andec2-userif osFamily=botllerocket which is the default option. It will also generate an SSH Key pair, that you can use later to connect to your cluster VMs. - Add template property if you chose to import the EKS-A VM OVA template, and set it to the VM template you imported. Check the vSphere preparation steps

template: /Example Datacenter/vm/EKS Anywhere/ubuntu-2004-kube-v1.21.2 - Remove the

Refer to vsphere configuration for more information on the configuring that can be used for a vSphere provider.

-

-

Set Credential Environment Variables

Before you create the initial/management cluster, you will need to set and export these environment variables for your vSphere user name and password. Make sure you use single quotes around the values so that your shell does not interpret the values

# vCenter User Credentials export GOVC_URL='[vCenter Server Domain Name]' # Example: https://sample.exampledomain.com export GOVC_USERNAME='[vSphere user name]' # Example: USER1@exampledomain export GOVC_PASSWORD='[vSphere password]' export GOVC_INSECURE=true export EKSA_VSPHERE_USERNAME='[vSphere user name]' # Example: USER1@exampledomain export EKSA_VSPHERE_PASSWORD='[vSphere password]' -

Set License Environment Variable

If you are creating a licensed cluster, set and export the license variable (see License cluster if you are licensing an existing cluster):

export EKSA_LICENSE='my-license-here' -

Now you are ready to create a cluster with the basic stettings.

Important

If you plan to enable other compnents such as, GitOps, oidc, IAM Roles for Service Accounts, etc, Skip creating the cluster now and go ahead adding the configuration for those components to your generated config file first. Or you would need to receate the cluster again as mentioned above.After you have finish adding all the configuration needed to your configuration file the

mgmt-cluster.yamland set your credential environment variables, you are ready to create the cluster. Run the create command with the option -v 9 to get the highest level of verbosity, in case you want to troubleshoot any issue happened during the creation of the cluster. You may need also to output it to a file, so you can look at it later.eksctl anywhere create cluster -f $MGMT_CLUSTER_NAME.yaml \ -v 9 > $MGMT_CLUSTER_NAME-$(date "+%Y%m%d%H%M").log 2>&1 -

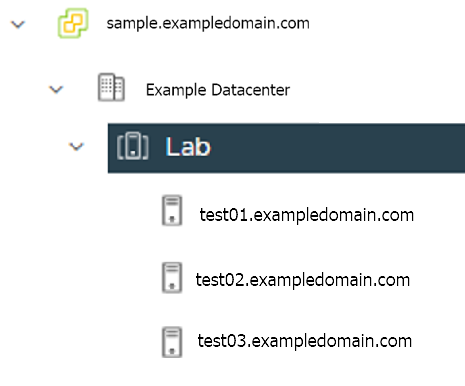

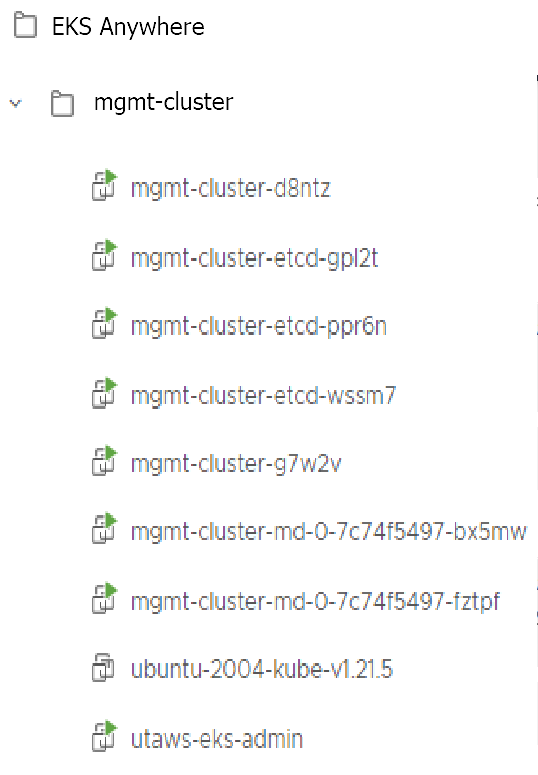

With the completion of the above steps, the management EKS Anywhere cluster is created on the configured vSphere environment under a sub-folder of the

EKS Anywherefolder. You can see the cluster VMs from the vSphere console as below:

-

Once the cluster is created a folder got created on the admin machine with the cluster name which contains the kubeconfig file and the cluster configuration file used to create the cluster, in addition to the generated SSH key pair that you can use to SSH into the VMs of the cluster.

ls mgmt-cluster/Output

eks-a-id_rsa mgmt-cluster-eks-a-cluster.kubeconfig eks-a-id_rsa.pub mgmt-cluster-eks-a-cluster.yaml -

Now you can use your cluster with the generated

KUBECONFIGfile:export KUBECONFIG=${PWD}/${MGMT_CLUSTER_NAME}/${MGMT_CLUSTER_NAME}-eks-a-cluster.kubeconfig kubectl cluster-infoThe cluster endpoint in the output of this command would be the controlPlaneConfiguration.endpoint.host provided in the mgmt-cluster.yaml config file.

-

Check the cluster nodes:

To check that the cluster completed, list the machines to see the control plane, etcd, and worker nodes:

kubectl get machines -AExample command output

NAMESPACE NAME PROVIDERID PHASE VERSION eksa-system mgmt-b2xyz vsphere:/xxxxx Running v1.21.2-eks-1-21-5 eksa-system mgmt-etcd-r9b42 vsphere:/xxxxx Running eksa-system mgmt-md-8-6xr-rnr vsphere:/xxxxx Running v1.21.2-eks-1-21-5 ...The etcd machine doesn’t show the Kubernetes version because it doesn’t run the kubelet service.

-

Check the initial/management cluster’s CRD:

To ensure you are looking at the initial/management cluster, list the CRD to see that the name of its management cluster is itself:

kubectl get clusters mgmt -o yamlExample command output

... kubernetesVersion: "1.21" managementCluster: name: mgmt workerNodeGroupConfigurations: ...Note

The initial cluster is now ready to deploy workload clusters. However, if you just want to use it to run workloads, you can deploy pod workloads directly on the initial cluster without deploying a separate workload cluster and skip the section on running separate workload clusters.
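The CRD check above can also be reduced to a single comparison with a kubectl JSONPath query. A minimal sketch, assuming `KUBECONFIG` points at the management cluster; `is_self_managed` is a hypothetical helper, and it uses the fully qualified resource name `clusters.anywhere.eks.amazonaws.com` because Cluster API also defines a `clusters` resource.

```shell
# Hypothetical helper: succeed if the EKS Anywhere Cluster object NAME lists
# itself as its own management cluster; return 2 if kubectl itself fails.
is_self_managed() {
  local name mgmt
  name=$1
  mgmt=$(kubectl get clusters.anywhere.eks.amazonaws.com "$name" \
    -o jsonpath='{.spec.managementCluster.name}') || return 2
  [ "$mgmt" = "$name" ]
}
```

`is_self_managed "$MGMT_CLUSTER_NAME"` succeeds on the management cluster. Once you add workload clusters, the same check against a workload cluster's object fails, because its `spec.managementCluster.name` names the management cluster instead.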

Create separate workload clusters

Follow these steps if you want to use your initial cluster to create and manage separate workload clusters. All steps listed below should be executed on the same admin machine that the management cluster was created on.

- Generate a workload cluster config:

  ```shell
  export WORKLOAD_CLUSTER_NAME='w01-cluster'
  export MGMT_CLUSTER_NAME='mgmt-cluster'
  eksctl anywhere generate clusterconfig $WORKLOAD_CLUSTER_NAME \
     --provider vsphere > $WORKLOAD_CLUSTER_NAME.yaml
  ```

  The command above creates a file named `w01-cluster.yaml` in the path where it is executed, with contents similar to the `mgmt-cluster.yaml` file that was generated for the management cluster in the previous section.

  The same key considerations and configuration parameters mentioned above for the initial cluster also apply to workload clusters.

- Refer to the initial config described earlier for the required and optional settings. Ensure workload cluster object names (`Cluster`, `vSphereDatacenterConfig`, `vSphereMachineConfig`, etc.) are distinct from management cluster object names. Be sure to set the `managementCluster` field to identify the name of the management cluster.

- Modify the generated workload cluster config parameters the same way you did in the generated configuration file of the management cluster. The only differences are in the following fields:

  - `controlPlaneConfiguration.endpoint.host`: As with the initial cluster, each workload cluster needs its own IP address in the Cluster field `controlPlaneConfiguration.endpoint.host`. This address must be different from the one used for the management cluster.

  - `managementCluster.name`: By default, the value of this field is the same as the cluster name when you generate the configuration file. Because this workload cluster is to be managed by the management cluster, change it to the management cluster name.

    ```yaml
    managementCluster:
      name: mgmt-cluster # the name of the initial/management cluster
    ```

  - `VSphereMachineConfig.folder`: It is recommended to have a separate folder path for each cluster you add, for organization purposes.

    ```yaml
    folder: /Example Datacenter/vm/EKS Anywhere/w01-cluster
    ```

  Other than that, all other parameters are configured the same way.

-

-

Create a workload cluster

Important

If you plan to enable other compnents such as oidc, IAM Roles for Service Accounts, etc, skip creating the cluster now and go ahead adding the configuration for those components to your generated config file first. Or you would need to receate the cluster again. If GitOps have been enabled on the initial/management cluster, you would not have the option to enable GitOps on the workload cluster, as the goal of using GitOps is to centrally manage all of your clusters.To create a new workload cluster from your management cluster run this command, identifying:

- The workload cluster yaml file

- The initial cluster’s credentials (this causes the workload cluster to be managed from the management cluster)

eksctl anywhere create cluster \
    -f $WORKLOAD_CLUSTER_NAME.yaml \
    --kubeconfig $MGMT_CLUSTER_NAME/$MGMT_CLUSTER_NAME-eks-a-cluster.kubeconfig \
    -v 9 > $WORKLOAD_CLUSTER_NAME-$(date "+%Y%m%d%H%M").log 2>&1

As noted earlier, adding the --kubeconfig option tells eksctl to use the management cluster identified by that kubeconfig file to create a different workload cluster.
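The create step can be wrapped so that a missing input file is caught before eksctl starts. This is a minimal sketch under stated assumptions: create_workload_cluster and the DRY_RUN variable are hypothetical names, it assumes it runs from the directory that holds both the workload config and the management cluster folder, and it passes eksctl only the flags shown in the command above.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the create command shown above.
# Usage: create_workload_cluster <workload-cluster-name> <mgmt-cluster-name>
# Set DRY_RUN=1 to print the command instead of running it.
create_workload_cluster() {
  local workload=$1 mgmt=$2
  local kubeconfig="${mgmt}/${mgmt}-eks-a-cluster.kubeconfig"
  local log f
  log="${workload}-$(date "+%Y%m%d%H%M").log"

  # Fail early if either input file is missing.
  for f in "${workload}.yaml" "$kubeconfig"; do
    if [ ! -f "$f" ]; then
      echo "missing file: $f" >&2
      return 1
    fi
  done

  if [ -n "${DRY_RUN:-}" ]; then
    echo "eksctl anywhere create cluster -f ${workload}.yaml --kubeconfig ${kubeconfig} -v 9 > ${log} 2>&1"
    return 0
  fi
  # --kubeconfig points eksctl at the management cluster that will manage the new cluster.
  eksctl anywhere create cluster -f "${workload}.yaml" --kubeconfig "$kubeconfig" -v 9 > "$log" 2>&1
}
```

For example, DRY_RUN=1 create_workload_cluster "$WORKLOAD_CLUSTER_NAME" "$MGMT_CLUSTER_NAME" prints the command it would run; dropping DRY_RUN runs it and writes the timestamped log file.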

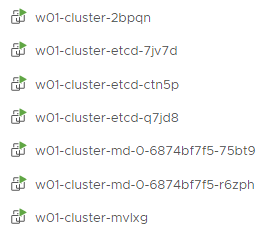

With the completion of the above steps, the workload EKS Anywhere cluster is created on the configured vSphere environment under a sub-folder of the EKS Anywhere folder. You can see the cluster VMs in the vSphere console.

Once the cluster is created, a folder with the cluster name is created on the admin machine. It contains the kubeconfig file, the cluster configuration file used to create the cluster, and the generated SSH key pair that you can use to SSH into the cluster VMs.

ls w01-cluster/

Output:

eks-a-id_rsa  eks-a-id_rsa.pub  w01-cluster-eks-a-cluster.kubeconfig  w01-cluster-eks-a-cluster.yaml
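A quick way to confirm that all four artifacts were written is a small check like the one below. It is a sketch: check_cluster_artifacts is a hypothetical helper, and the file names are taken from the ls output above, assuming the same naming pattern for any cluster name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: verify that the cluster folder on the admin machine
# contains the SSH key pair, the kubeconfig, and the cluster config file.
# Usage: check_cluster_artifacts <cluster-name>
check_cluster_artifacts() {
  local name=$1 missing=0 f
  for f in eks-a-id_rsa eks-a-id_rsa.pub \
           "${name}-eks-a-cluster.kubeconfig" "${name}-eks-a-cluster.yaml"; do
    if [ ! -f "${name}/${f}" ]; then
      echo "missing: ${name}/${f}" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

Run it from the directory where the create command was executed, for example check_cluster_artifacts w01-cluster.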

You can list the workload clusters managed by the management cluster.

export KUBECONFIG=${PWD}/${MGMT_CLUSTER_NAME}/${MGMT_CLUSTER_NAME}-eks-a-cluster.kubeconfig
kubectl get clusters

Check the workload cluster:

You can now use the workload cluster as you would any Kubernetes cluster. Change your credentials to point to the kubeconfig file of the new workload cluster, then get the cluster info:

export KUBECONFIG=${PWD}/${WORKLOAD_CLUSTER_NAME}/${WORKLOAD_CLUSTER_NAME}-eks-a-cluster.kubeconfig
kubectl cluster-info

The cluster endpoint in the output of this command should be the controlPlaneConfiguration.endpoint.host provided in the w01-cluster.yaml config file.

To verify that the expected number of cluster worker nodes is up and running, use kubectl to show that the nodes are Ready:

kubectl get nodes
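To turn this visual check into a scripted one (for example, in a CI job), the output of kubectl get nodes can be piped through a short filter. This is a minimal sketch: not_ready_nodes is a hypothetical helper, and it assumes the default kubectl get nodes table, where the second column is STATUS. A cordoned node reports Ready,SchedulingDisabled and is therefore flagged as well.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads "kubectl get nodes" output on stdin,
# prints the name of every node whose STATUS is not exactly "Ready",
# and exits non-zero if any such node exists or no nodes are listed.
not_ready_nodes() {
  awk '
    NR == 1 { next }                      # skip the header row
    { n++ }
    $2 != "Ready" { print $1; bad = 1 }
    END { if (bad || n == 0) exit 1; exit 0 }
  '
}
```

Usage: kubectl get nodes | not_ready_nodes && echo "all nodes are Ready"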

Test deploying an application with:

kubectl apply -f "https://anywhere.eks.amazonaws.com/manifests/hello-eks-a.yaml"

Verify the test application as described in the Deploy test application section.

Add more workload clusters:

To add more workload clusters, go through the same steps you used to create the initial workload cluster: copy the config file to a new name (such as w02-cluster.yaml), modify the resource names, and run the create cluster command again.

See the Cluster management section for more information on common operational tasks like scaling and deleting the cluster.

3 - Curated Packages Workshop

Workshops for using curated packages.

3.1 - Prometheus use cases

Important

- To install the Prometheus package, follow the installation guide.

- To view the complete configuration options for the Prometheus package and their default values, refer to the Prometheus package spec.

3.1.1 - Prometheus with Grafana

This tutorial demonstrates how to configure the Prometheus package to scrape metrics from an EKS Anywhere cluster and visualize them in Grafana.

This tutorial walks through the following procedures:

- Install the Prometheus package
- Install Grafana helm charts
- Set up Grafana dashboards

Install the Prometheus package

The Prometheus package creates two components by default:

- Prometheus-server, which collects metrics from configured targets, and stores the metrics as time series data;

- Node-exporter, which exposes a wide variety of hardware- and kernel-related metrics for prometheus-server (or an equivalent metrics collector, e.g. the ADOT collector) to scrape.

The prometheus-server is pre-configured to scrape the following targets at a 1m interval:

- Kubernetes API servers

- Kubernetes nodes

- Kubernetes nodes cadvisor

- Kubernetes service endpoints

- Kubernetes services

- Kubernetes pods

- Prometheus-server itself

If no config modification is needed, a user can proceed to the Prometheus installation guide.

Prometheus Package Customization

In this section, we cover a few frequently requested config customizations. After determining the appropriate customization, proceed to the Prometheus installation guide to complete the package installation. Also refer to the Prometheus package spec for additional config options.

Change prometheus-server global configs

By default, prometheus-server is configured with evaluation_interval: 1m, scrape_interval: 1m, and scrape_timeout: 10s. These values can be overridden if needed.

The following config allows the user to do such customization:

apiVersion: packages.eks.amazonaws.com/v1alpha1
kind: Package
metadata:
  name: generated-prometheus
  namespace: eksa-packages-<cluster-name>
spec:
  packageName: prometheus
  config: |
    server:
      global:
        evaluation_interval: "30s"
        scrape_interval: "30s"
        scrape_timeout: "15s"
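When overriding these values, keep in mind that Prometheus rejects a configuration whose scrape_timeout is greater than its scrape_interval. The sketch below checks that rule before you apply the package config. to_seconds and check_scrape_timing are hypothetical helpers, and they only understand whole-number durations with an s, m, or h suffix (such as 15s or 1m), not the full Prometheus duration syntax.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a whole-number duration with an s, m or h
# suffix (e.g. 15s, 1m, 2h) to seconds. Other Prometheus units (ms, d, w, ...)
# and compound durations such as 1m30s are rejected.
to_seconds() {
  local value=${1%?} unit=${1#"${1%?}"}
  case $value in
    ''|*[!0-9]*) echo "unsupported duration: $1" >&2; return 1 ;;
  esac
  # Strip leading zeros so shell arithmetic does not treat the value as octal.
  value=${value#"${value%%[!0]*}"}
  [ -n "$value" ] || value=0
  case $unit in
    s) echo "$value" ;;
    m) echo $((value * 60)) ;;
    h) echo $((value * 3600)) ;;
    *) echo "unsupported duration: $1" >&2; return 1 ;;
  esac
}

# Usage: check_scrape_timing <scrape_interval> <scrape_timeout>
check_scrape_timing() {
  local interval timeout
  interval=$(to_seconds "$1") || return 1
  timeout=$(to_seconds "$2") || return 1
  if [ "$timeout" -gt "$interval" ]; then
    echo "scrape_timeout ($2) must not exceed scrape_interval ($1)" >&2
    return 1
  fi
}
```

For the example above, check_scrape_timing 30s 15s succeeds, while check_scrape_timing 10s 15s reports an error.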

Run prometheus-server as statefulSets

By default, prometheus-server is created as a Deployment with replicaCount equal to 1. To increase replicaCount beyond 1, deploy prometheus-server as a StatefulSet instead. This allows multiple prometheus-server pods to share the same data storage.

The following config allows the user to do such customization:

apiVersion: packages.eks.amazonaws.com/v1alpha1
kind: Package
metadata:
  name: generated-prometheus
  namespace: eksa-packages-<cluster-name>
spec:
  packageName: prometheus
  config: |
    server:
      replicaCount: 2
      statefulSet:
        enabled: true

Disable prometheus-server and use node-exporter only

A user may disable the prometheus-server when:

- they would like to use node-exporter to expose hardware- and kernel-related metrics, while

- they have deployed another metrics collector in the cluster and configured a remote-write storage solution, which fulfills the prometheus-server functionality (check out the ADOT with Amazon Managed Prometheus and Amazon Managed Grafana workshop to learn how to do so).

The following config allows the user to do such customization:

apiVersion: packages.eks.amazonaws.com/v1alpha1
kind: Package
metadata:
  name: generated-prometheus
  namespace: eksa-packages-<cluster-name>
spec:
  packageName: prometheus
  config: |
    server:
      enabled: false

Disable node-exporter and use prometheus-server only

A user may disable the node-exporter when:

- they would like to deploy multiple prometheus-server packages for a cluster, while

- deploying only one node-exporter instance (or none) per node.

The following config allows the user to do such customization:

apiVersion: packages.eks.amazonaws.com/v1alpha1
kind: Package
metadata:
  name: generated-prometheus
  namespace: eksa-packages-<cluster-name>
spec:
  packageName: prometheus
  config: |
    nodeExporter:
      enabled: false
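Because all four customizations share the same Package wrapper, it can be convenient to keep only the config body in a separate file and render the full manifest from it. The sketch below is one way to do that: render_prometheus_package is a hypothetical helper, the file names in the usage line are examples, and the rendered output still needs to be installed by following the Prometheus installation guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a Prometheus Package manifest for a cluster,
# embedding the contents of a config file under spec.config.
# Usage: render_prometheus_package <cluster-name> <config-file>
render_prometheus_package() {
  local cluster=$1 config_file=$2
  [ -f "$config_file" ] || { echo "missing config file: $config_file" >&2; return 1; }
  cat <<EOF
apiVersion: packages.eks.amazonaws.com/v1alpha1
kind: Package
metadata:
  name: generated-prometheus
  namespace: eksa-packages-${cluster}
spec:
  packageName: prometheus
  config: |
EOF
  # Indent every config line by four spaces so it nests under "config: |".
  sed 's/^/    /' "$config_file"
}
```

For example, render_prometheus_package w01-cluster node-exporter-off.yaml > prometheus.yaml, where node-exporter-off.yaml holds only the nodeExporter block.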

Prometheus Package Test

To ensure the Prometheus package is installed correctly in the cluster, a user can perform the following tests.

Access prometheus-server web UI

Port forward Prometheus to localhost port 9090:

export PROM_SERVER_POD_NAME=$(kubectl get pods --namespace <namespace> -l "app=prometheus,component=server" -o jsonpath="{.items[0].metadata.name}")

kubectl port-forward $PROM_SERVER_POD_NAME -n <namespace> 9090

Go to http://localhost:9090 to access the web UI.

Run sample queries

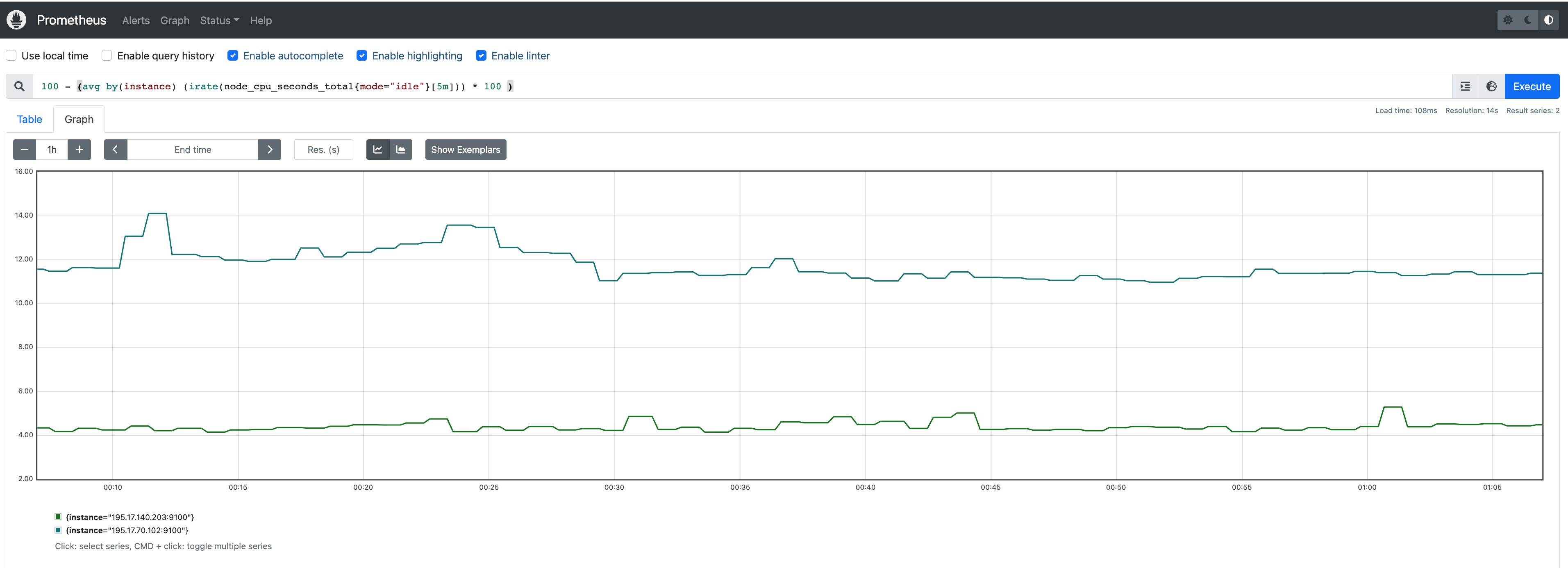

Run sample queries in Prometheus web UI to confirm the targets have been configured properly. For example, a user can run the following query to obtain the CPU utilization rate by node.

100 - (avg by(instance) (irate(node_cpu_seconds_total{mode="idle"}[5m])) * 100 )

The output will be displayed on the Graph tab.
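To see what the query computes, consider one node with two CPUs. irate() takes the last two samples of the idle-time counter for each CPU and returns the per-second increase, which is the fraction of time that CPU was idle. avg by(instance) averages those fractions across the node's CPUs, and subtracting the resulting percentage from 100 yields busy time. The awk sketch below reproduces that arithmetic offline for illustration only: cpu_utilization is a hypothetical helper and the sample numbers are made up, so it is not a replacement for the PromQL query.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring
#   100 - (avg by(instance) (irate(node_cpu_seconds_total{mode="idle"}[5m])) * 100)
# for a single node. Input (stdin), one line per CPU:
#   <idle_seconds_at_t1> <idle_seconds_at_t2> <seconds_between_t1_and_t2>
cpu_utilization() {
  awk '
    { sum += ($2 - $1) / $3; n++ }       # per-CPU idle fraction (irate)
    END {
      if (n == 0) exit 1                 # no samples: nothing to average
      printf "%.1f\n", 100 - (sum / n) * 100
    }
  '
}
```

For example, printf '1000 1027 30\n1000 1021 30\n' | cpu_utilization prints 20.0: one CPU was idle 27 of 30 seconds (0.9) and the other 21 of 30 (0.7), so the average idle fraction is 0.8 and the node is 20% busy.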

Install Grafana helm charts

A user can install Grafana in the cluster to visualize the Prometheus metrics. We used the Grafana helm chart as an example below, though other deployment methods are also possible.

Get helm chart repo info:

helm repo add grafana https://grafana.github.io/helm-charts
helm repo update

Install the helm chart

helm install my-grafana grafana/grafana

Set up Grafana dashboards

Access Grafana web UI

Obtain Grafana login password:

kubectl get secret --namespace default my-grafana -o jsonpath="{.data.admin-password}" | base64 --decode; echo

Port forward Grafana to localhost port 3000:

export GRAFANA_POD_NAME=$(kubectl get pods --namespace default -l "app.kubernetes.io/name=grafana,app.kubernetes.io/instance=my-grafana" -o jsonpath="{.items[0].metadata.name}")
kubectl --namespace default port-forward $GRAFANA_POD_NAME 3000

Go to http://localhost:3000 to access the web UI. Log in with username admin and the password obtained in the Obtain Grafana login password step above.

Add Prometheus data source

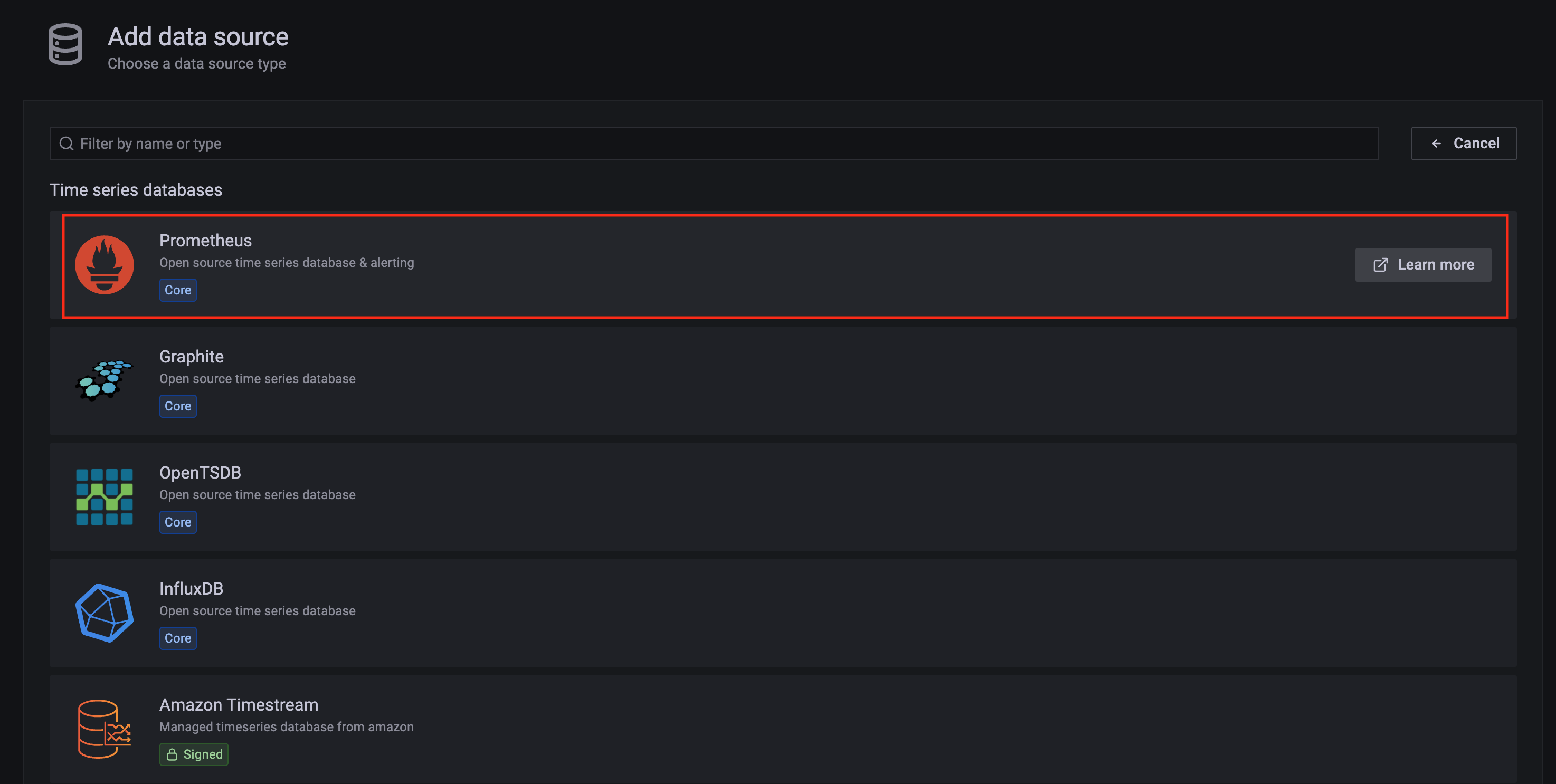

-

Click on the

Configurationsign on the left navigation bar, selectData sources, then choosePrometheusas theData source.

-

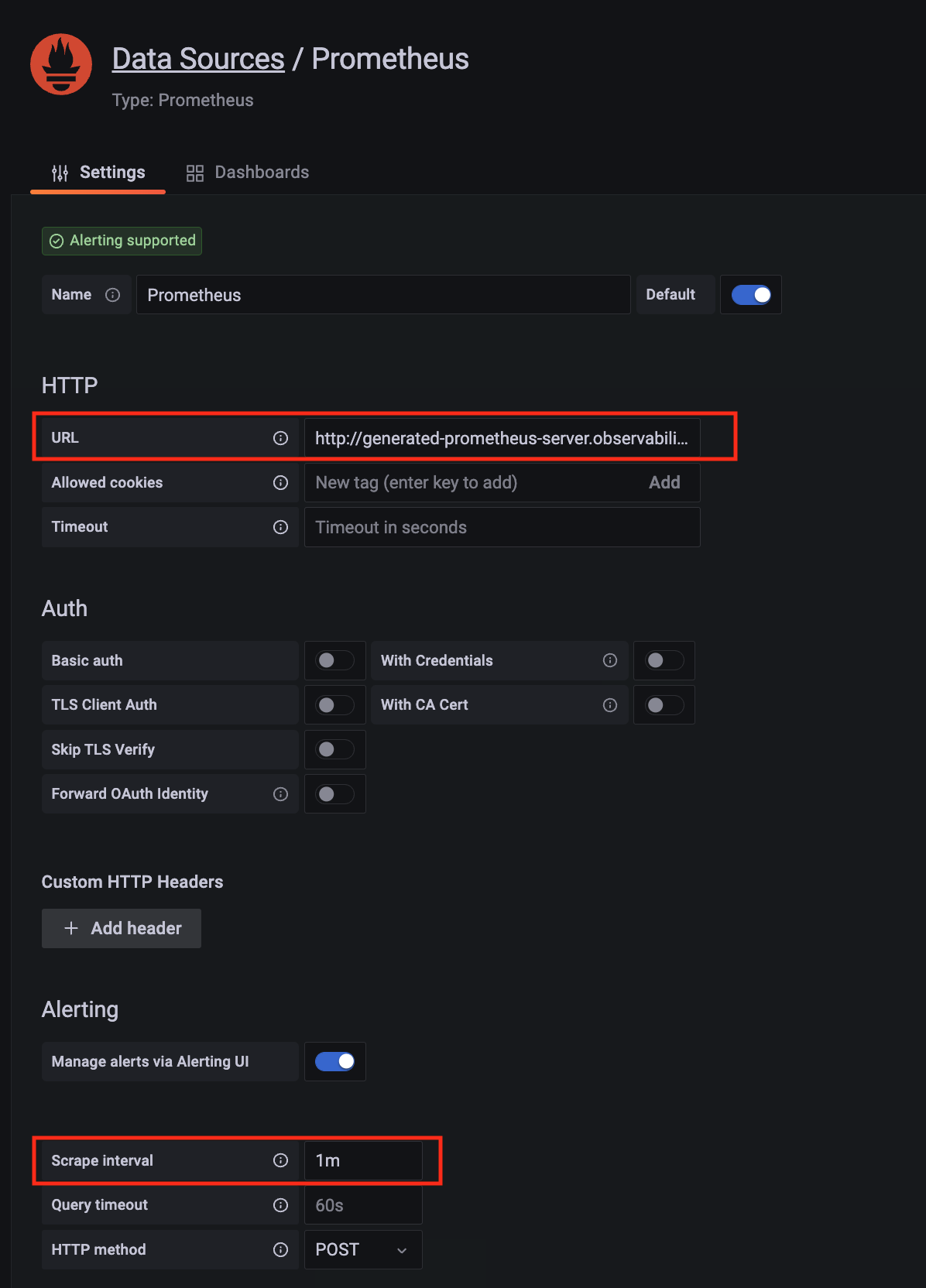

Configure Prometheus data source with the following details:

- Name:

Prometheusas an example. - URL:

http://<prometheus-server-end-point-name>.<namespace>:9090. If the package default values are used, this will behttp://generated-prometheus-server.observability:9090. - Scrape interval:

1mor the value specified by user in the package config. - Select

Save and test. A notificationdata source is workingshould be displayed.

- Name:
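The data source URL is the in-cluster DNS name of the prometheus-server Service plus port 9090. The small helper below spells that out. prometheus_datasource_url is hypothetical; its defaults are the package defaults quoted above (generated-prometheus-server in the observability namespace), and it assumes Grafana runs in the same cluster so that the short <service>.<namespace> name resolves.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the Grafana data source URL for prometheus-server.
# Usage: prometheus_datasource_url [service-name] [namespace]
prometheus_datasource_url() {
  local service=${1:-generated-prometheus-server} namespace=${2:-observability}
  echo "http://${service}.${namespace}:9090"
}
```

Calling it without arguments prints the default URL; pass the service name and namespace if you changed them when installing the package.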

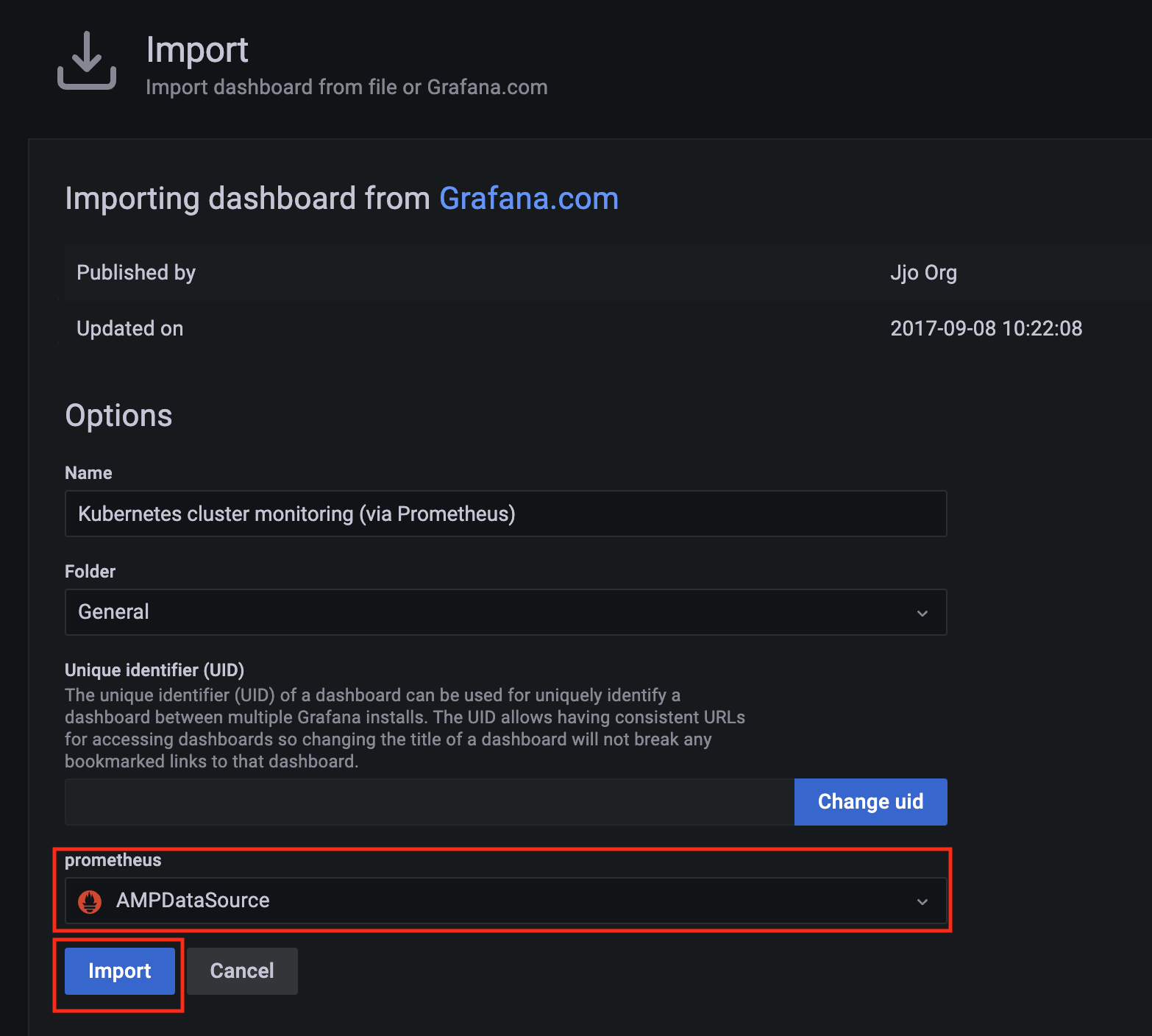

Import dashboard templates

-

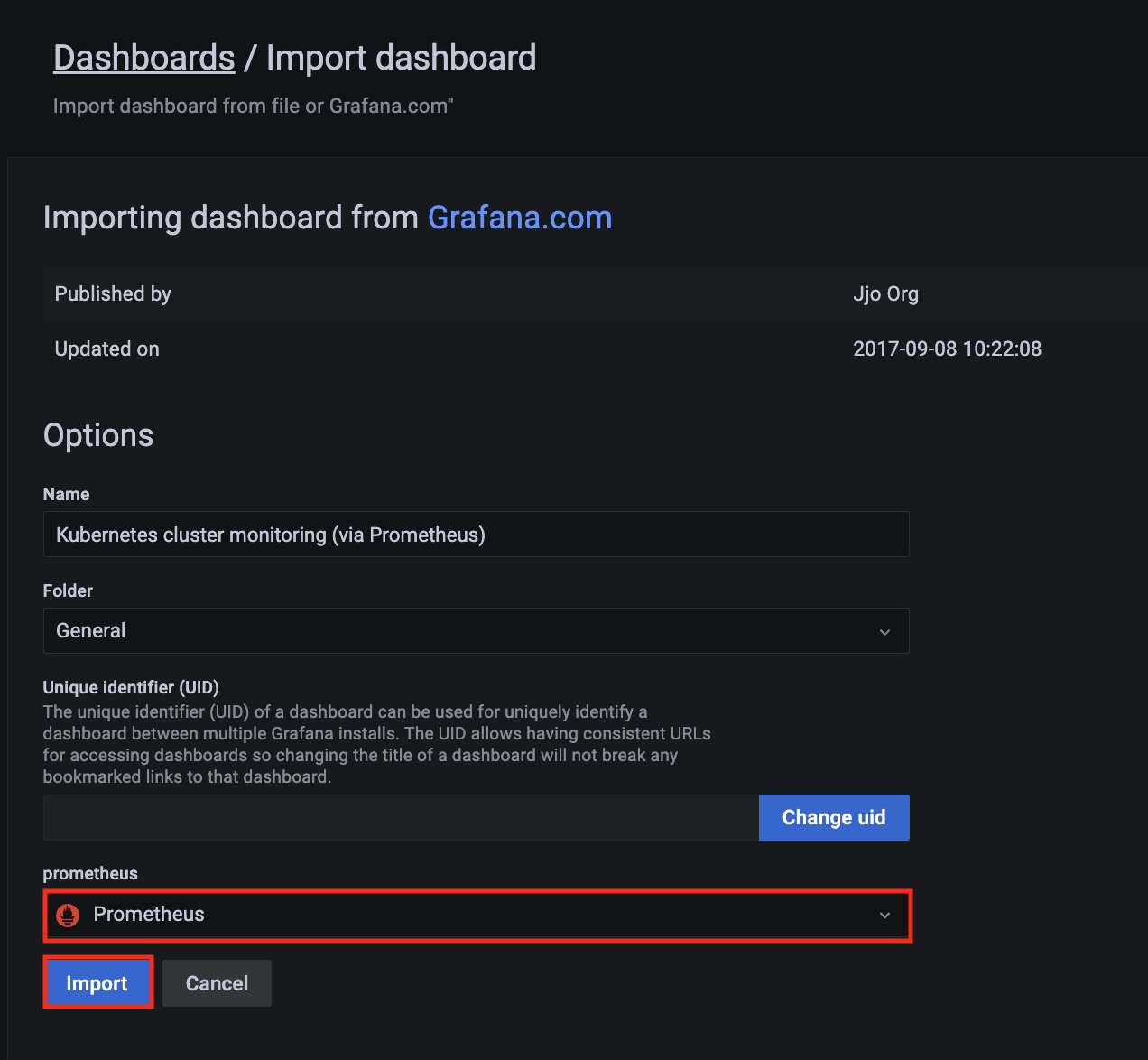

Import a dashboard template by hovering over to the

Dashboardsign on the left navigation bar, and click onImport. Type315in theImport via grafana.comtextbox and selectImport. From the dropdown at the bottom, selectPrometheusand selectImport.

-

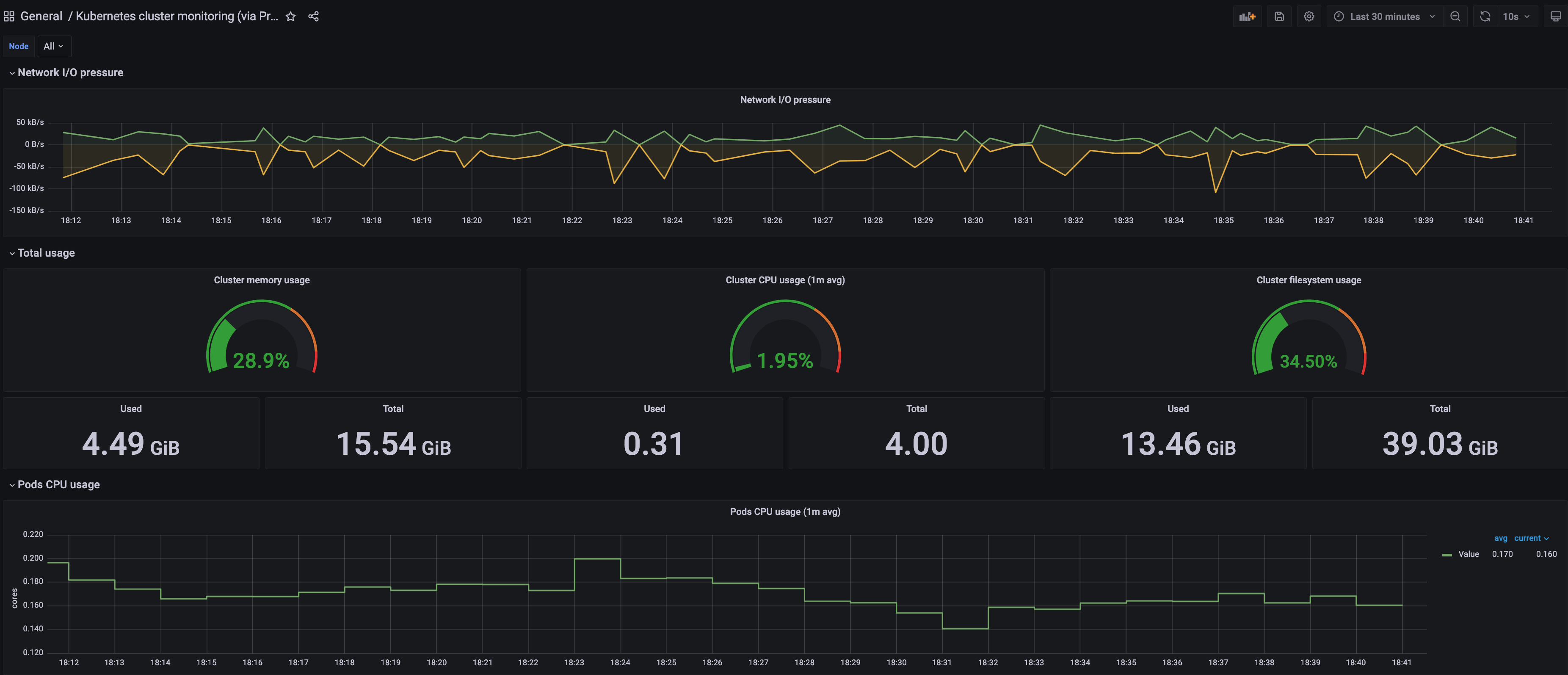

A

Kubernetes cluster monitoring (via Prometheus)dashboard will be displayed.

-

Perform the same procedure for template

1860. ANode Exporter Fulldashboard will be displayed.

3.2 - ADOT use cases

Important

To install the ADOT package, follow the installation guide.

3.2.1 - ADOT with AMP and AMG

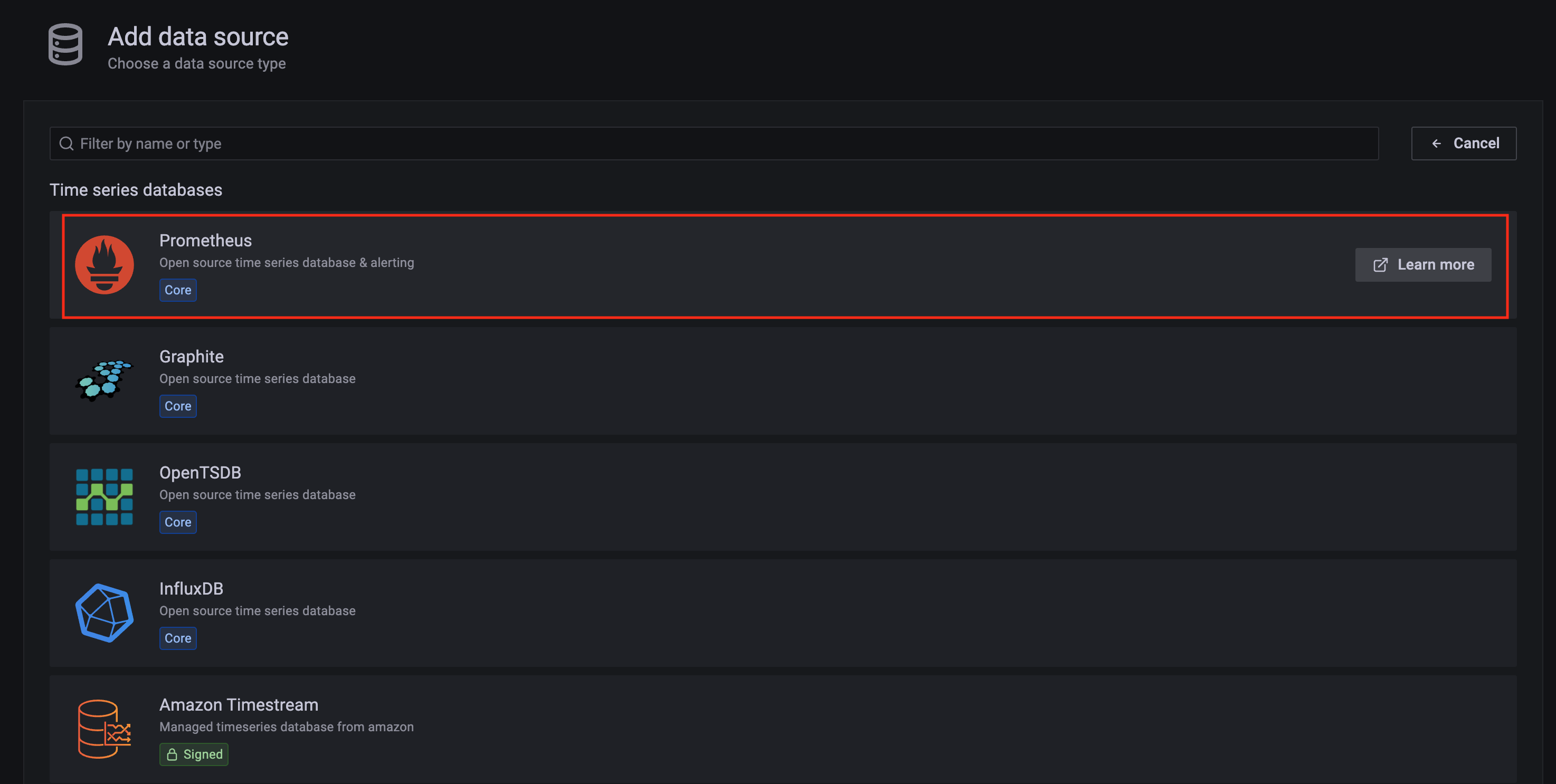

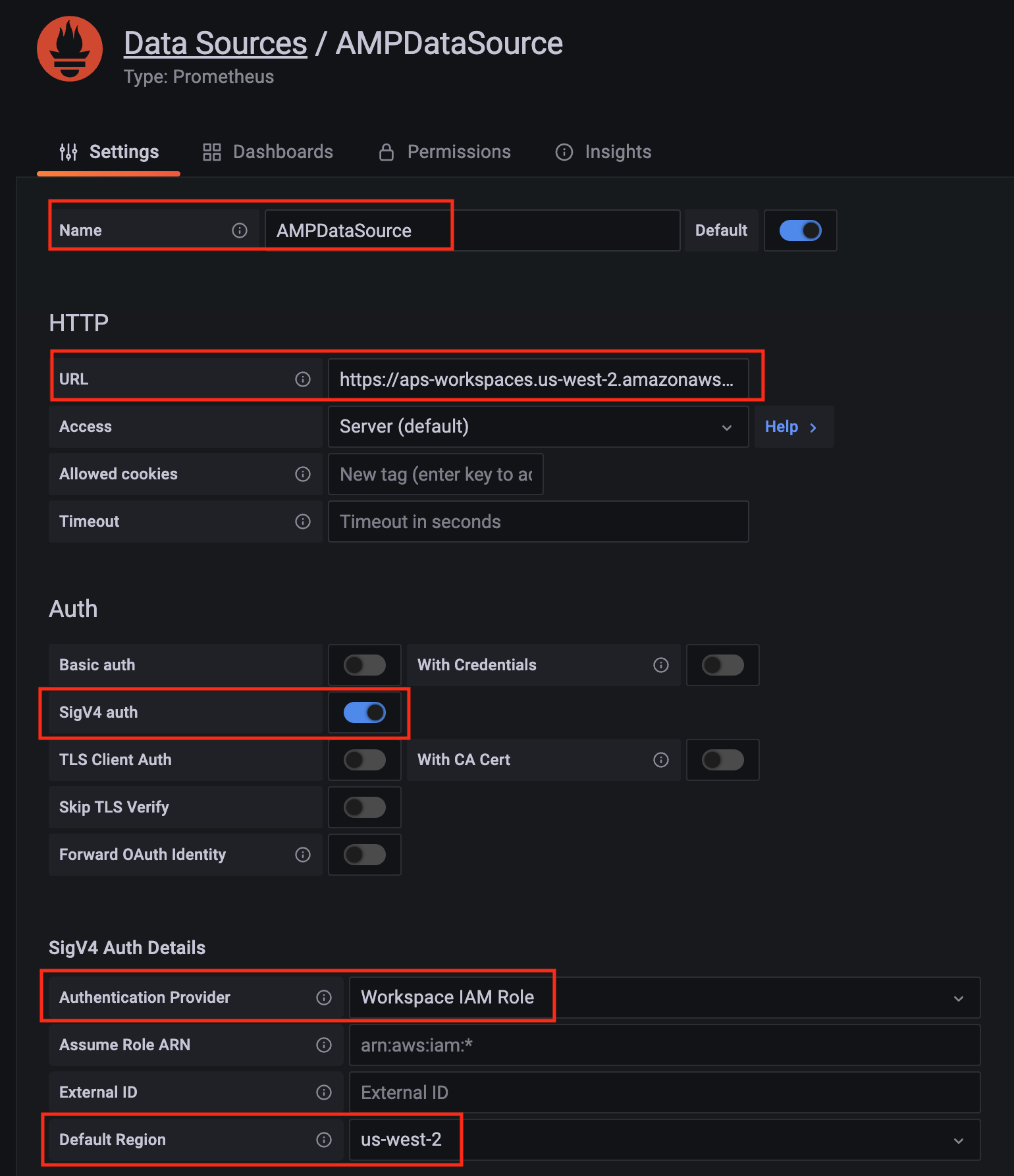

This tutorial demonstrates how to configure the ADOT package to scrape metrics from an EKS Anywhere cluster and send them to Amazon Managed Service for Prometheus (AMP) and Amazon Managed Grafana (AMG).

This tutorial walks through the following procedures:

- Create an AMP workspace;

- Create a cluster with IAM Roles for Service Account (IRSA);

- Install the ADOT package;

- Create an AMG workspace and connect to the AMP workspace.

Note

- We included Test sections below for critical steps to help users validate that they have completed each procedure properly. We recommend going through them in sequence as checkpoints of your progress.

- We recommend creating all resources in the us-west-2 region.

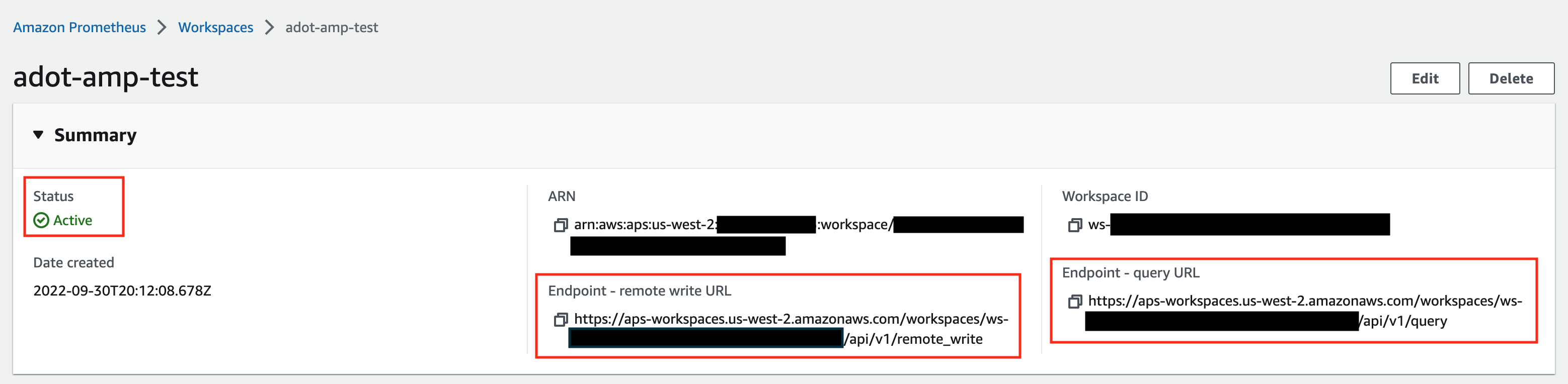

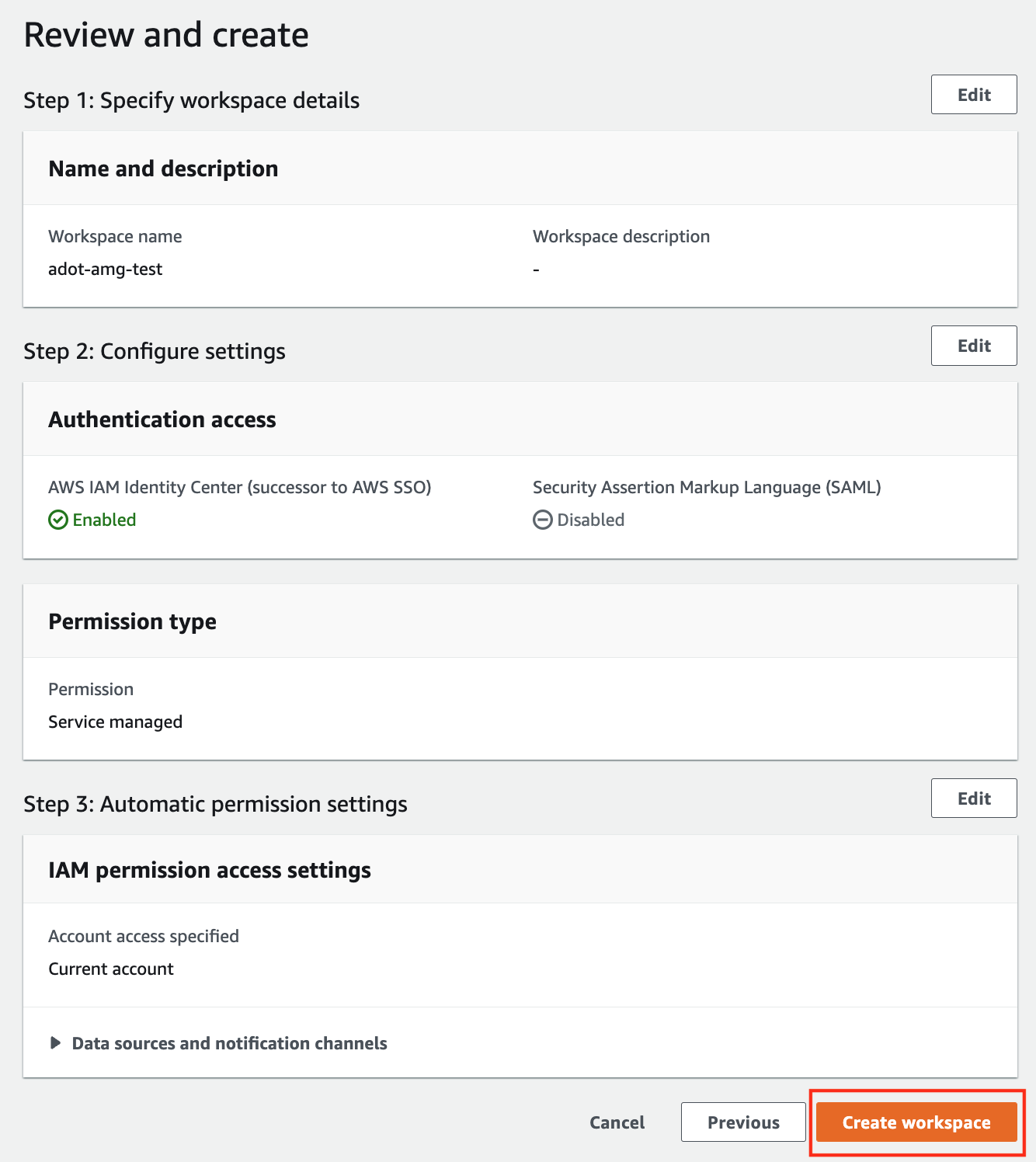

Create an AMP workspace

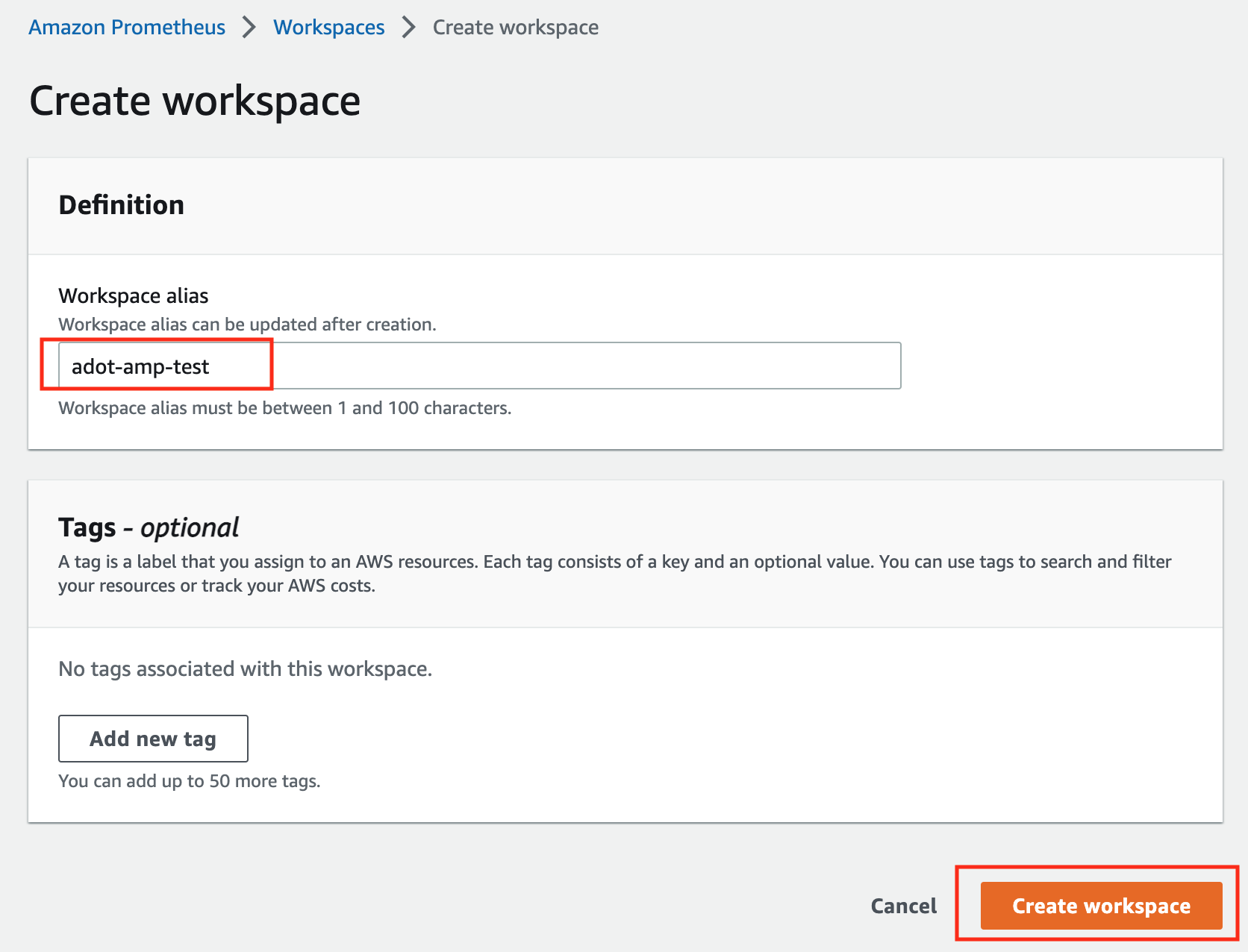

An AMP workspace is created to receive metrics from the ADOT package and respond to query requests from AMG. Follow the steps below to complete the setup:

- Open the AMP console at https://console.aws.amazon.com/prometheus/.

- Choose region us-west-2 from the top right corner.

- Click Create to create a workspace.

- Type a workspace alias (adot-amp-test as an example), and click Create workspace.

- Make note of the URLs displayed for Endpoint - remote write URL and Endpoint - query URL. You'll need them when you configure your ADOT package to remote write metrics to this workspace and when you query metrics from this workspace. Make sure the workspace's Status shows Active before proceeding to the next step.
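If you want to script the later configuration steps, both URLs can also be derived from the workspace ID, because they share a common base. The sketch below assumes the endpoint format AMP uses for workspaces (https://aps-workspaces.<region>.amazonaws.com/workspaces/<workspace-id>/...). amp_endpoints is a hypothetical helper and ws-EXAMPLE is a placeholder ID, so always compare the result with the URLs shown in the console.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the AMP remote write and query URLs for a workspace,
# assuming the standard aps-workspaces endpoint format.
# Usage: amp_endpoints <region> <workspace-id>
amp_endpoints() {
  local region=$1 workspace_id=$2
  local base="https://aps-workspaces.${region}.amazonaws.com/workspaces/${workspace_id}"
  echo "remote_write: ${base}/api/v1/remote_write"
  echo "query: ${base}/api/v1/query"
}
```

For example, amp_endpoints us-west-2 ws-EXAMPLE prints the two URLs for the placeholder workspace in the recommended region.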

For additional options (e.g. through the CLI) and configurations (e.g. adding a tag) when creating an AMP workspace, refer to the AWS AMP create a workspace guide.

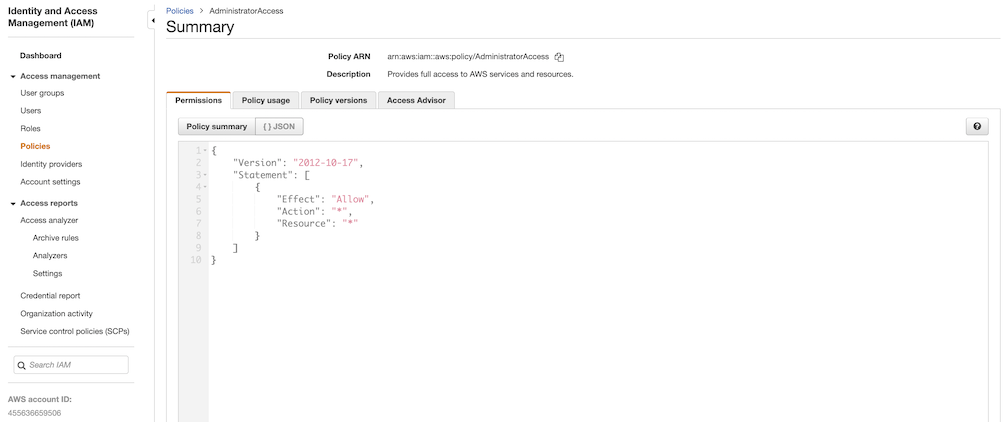

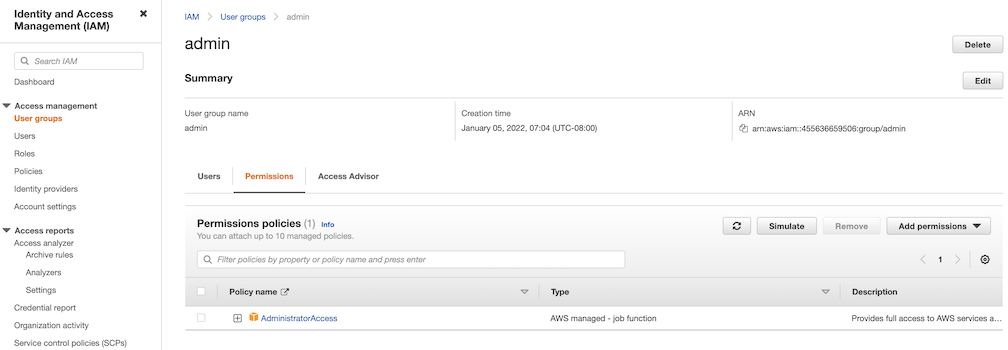

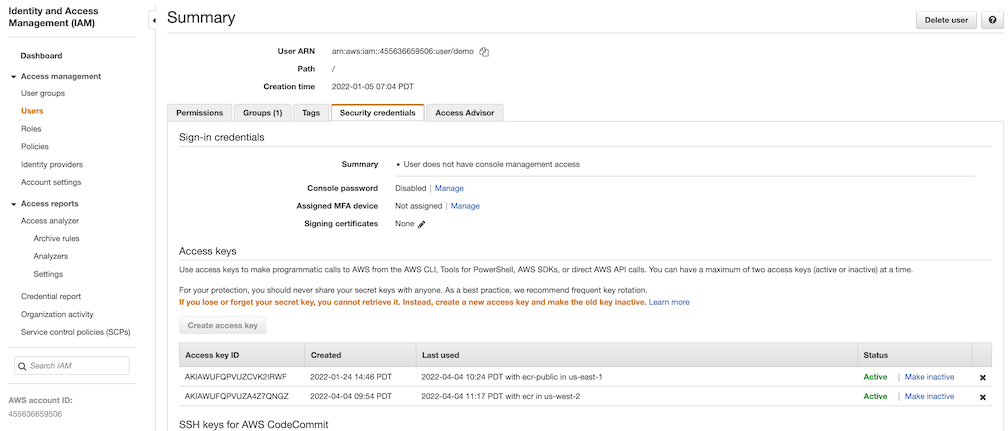

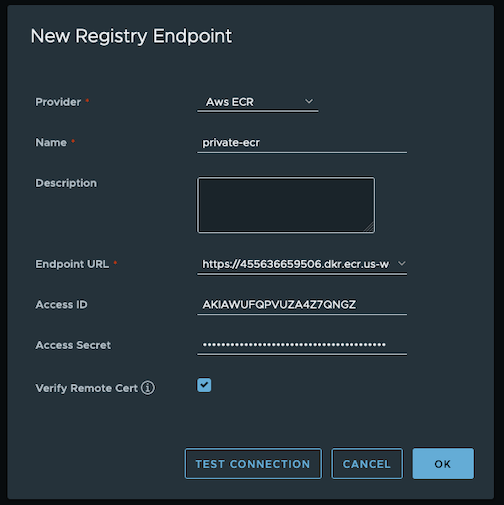

Create a cluster with IRSA

To enable ADOT pods that run in EKS Anywhere clusters to authenticate with AWS services, a user needs to set up IRSA at cluster creation. The EKS Anywhere cluster spec for Pod IAM gives step-by-step guidance on how to do so. There are a few things to keep in mind while working through the guide:

- While completing the Create an OIDC provider step, a user should:

  - create the S3 bucket in the us-west-2 region, and

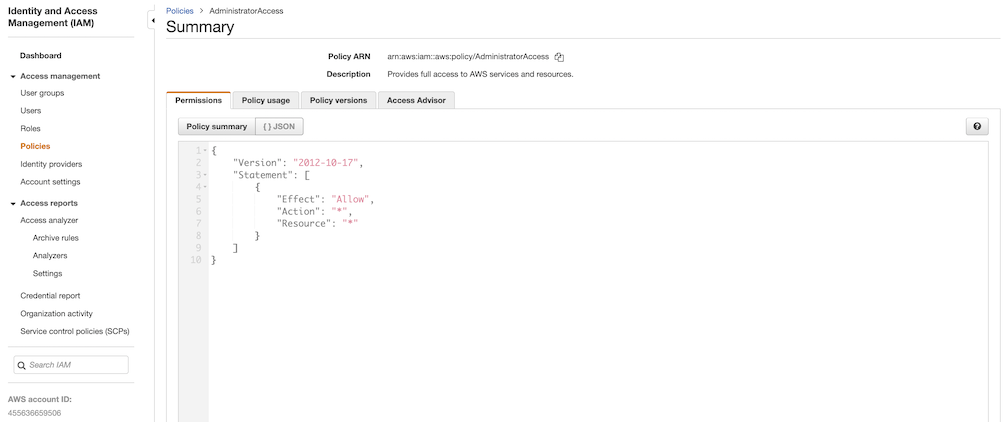

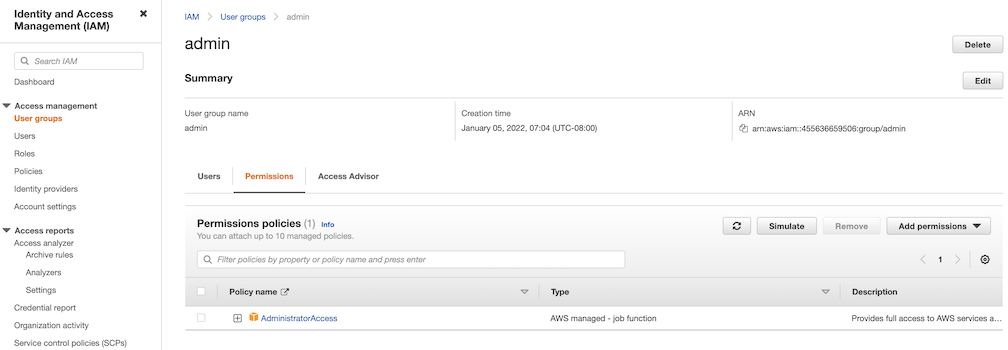

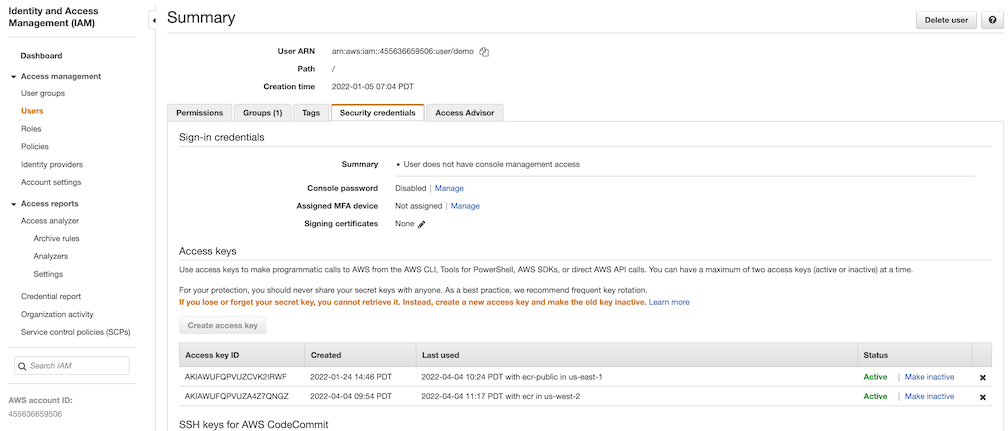

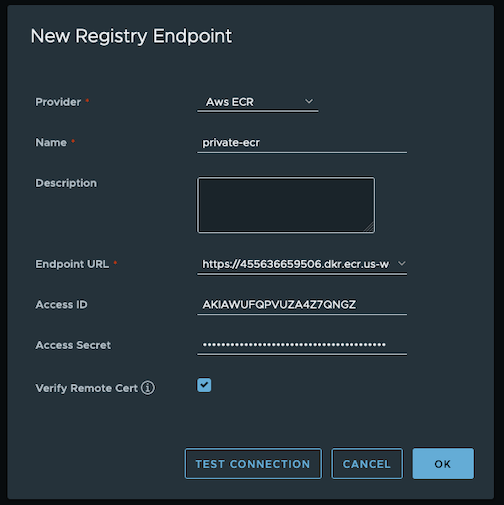

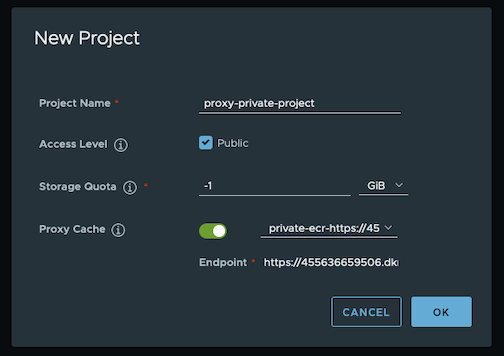

  - attach an IAM policy with proper AMP access to the IAM role.